In the Race to Lower Global Emissions, the Middle East Is Certainly Not Helping

Some of the countries facing the biggest threat from the climate crisis seem all too intent on making it far worse.

The Covid Revisionists Are Endangering Us All

As the possibility of a new pandemic looms, influential figures are telling us we shouldn’t have been so worried about the last one. This spells trouble.

How New Title IX Rules Leave Sexual Assault Survivors in the Lurch

The Biden administration’s updates to the regulations have laudable aims, but one blind spot leaves victims vulnerable to retaliatory lawsuits.

Want to Fight Mass Incarceration? Start With Your Local Jail

A new collection of essays from academics and activists devoted to prison abolition focuses on the quiet but rapid expansion of the carceral system in small towns and municipaliti…

Latest

The Supreme Court’s 5 Male Justices Are Fully in the Tank for Trump

Apr 25, 2024 / Elie Mystal

Fellow Freelancers: Don’t Marry Rich, Organize!

Apr 25, 2024 / Amy Littlefield

The Religious Foundations of Transhumanism

Apr 25, 2024 / Audio

Genocide in Real Time

Apr 25, 2024 / Ronald Grigor Suny

Pecker Exposes Lengths Taken to Please Trump

Apr 24, 2024 / Chris Lehmann

The “Troublemakers” of the Labor Movement Gather in Chicago

Apr 24, 2024 / Ella Fanger

Nation Voices

Israel-Gaza War

“The Bulldozer Kept Coming”: A Girl Stares Down Death in Gaza

The extraordinary story of a 14-year-old, her mother, and what happened when the Israeli military came to destroy their house.

Genocide in Real Time

As an American, I share the deep sorrow over my country’s complicity in this horrific crime against humanity.

Israel’s Attacks on Gaza Are Not “Mistakes.” They’re Crimes.

The political and media class is doing what it always does with the US and its allies: trying to frame deliberate atrocities as tragic mishaps.

Popular

- The Supreme Court’s 5 Male Justices Are Fully in the Tank for Trump
- “The Bulldozer Kept Coming”: A Girl Stares Down Death in Gaza
- The Covid Revisionists Are Endangering Us All
- Fellow Freelancers: Don’t Marry Rich, Organize!

From the Archive

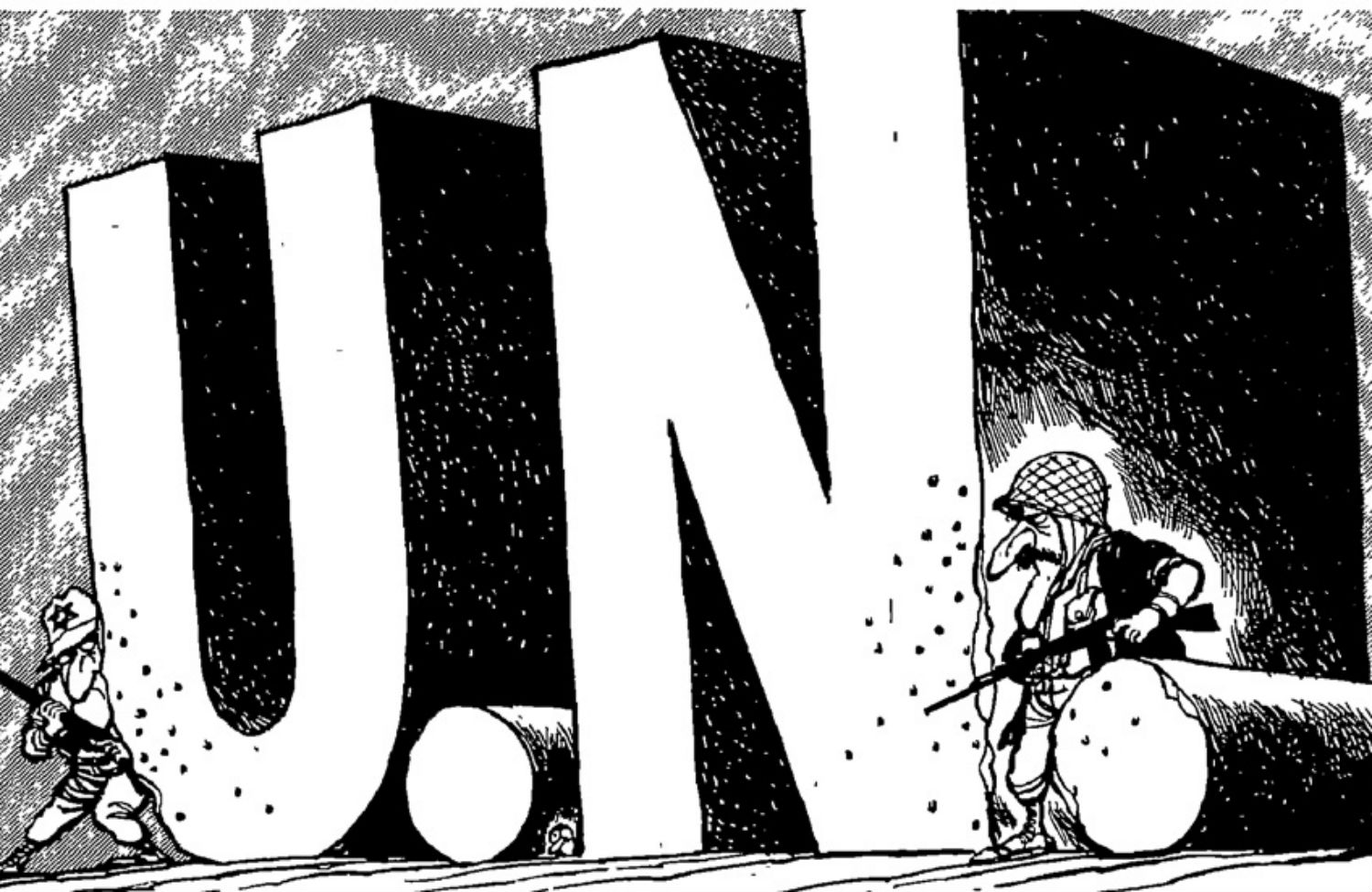

July 1, 2014: We Denounced the Israel Lobby Back in 1976

Early warnings of an American policy in thrall to the Israeli right.

Politics

Summer Lee Proves That “Opposing Genocide Is Good Politics and Good Policy”

Last week, the Pennsylvania representative voted against unconditional military aid for Israel. This week, she won what was supposed to be a tough primary by an overwhelming margi…

Is Donald Trump on Drugs? If Not, He Should Be.

His true addiction explains the president’s doziness.

The House Foreign Aid Bills Have Put a Target on Mike Johnson’s Back

After the House voted to send $95 billion to Ukraine, Israel, and Taiwan, far-right Republicans are threatening a motion to vacate against the speaker of the House.

Books & the Arts

Want to Fight Mass Incarceration? Start With Your Local Jail

A new collection of essays from academics and activists devoted to prison abolition focuses on the quiet but rapid expansion of the carceral system in small towns and municipaliti…

Is Comedy Really an Art?

A history of comedy’s last three decades of pop culture dominance argues that it is among the most consequential American art forms.

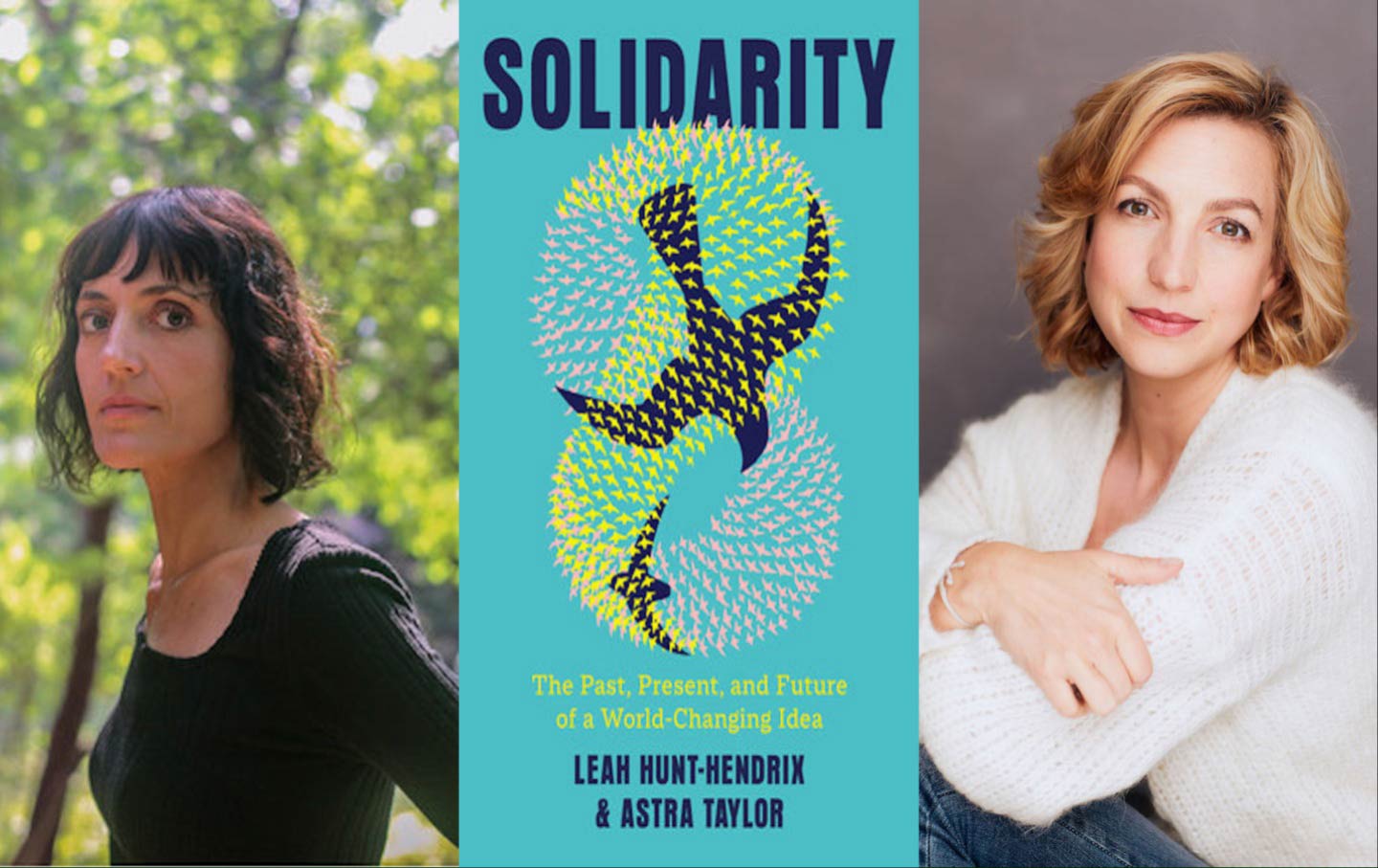

Talking “Solidarity” With Astra Taylor and Leah Hunt-Hendrix

A conversation with the activists and writers about their wide-ranging history of the politics of the common good and togetherness.

Features

Latest Podcasts

The Nation produces various podcasts, including Contempt of Court with Elie Mystal, Start Making Sense with Jon Wiener, Time of Monsters with Jeet Heer, and Edge of Sports with Dave Zirin.

The Religious Foundations of Transhumanism

Podcast / Tech Won’t Save Us

A Better Two-State Solution—Plus, the UAW’s Victory

Podcast / Start Making Sense

Did Fascism Happen Here?

Podcast / American Prestige