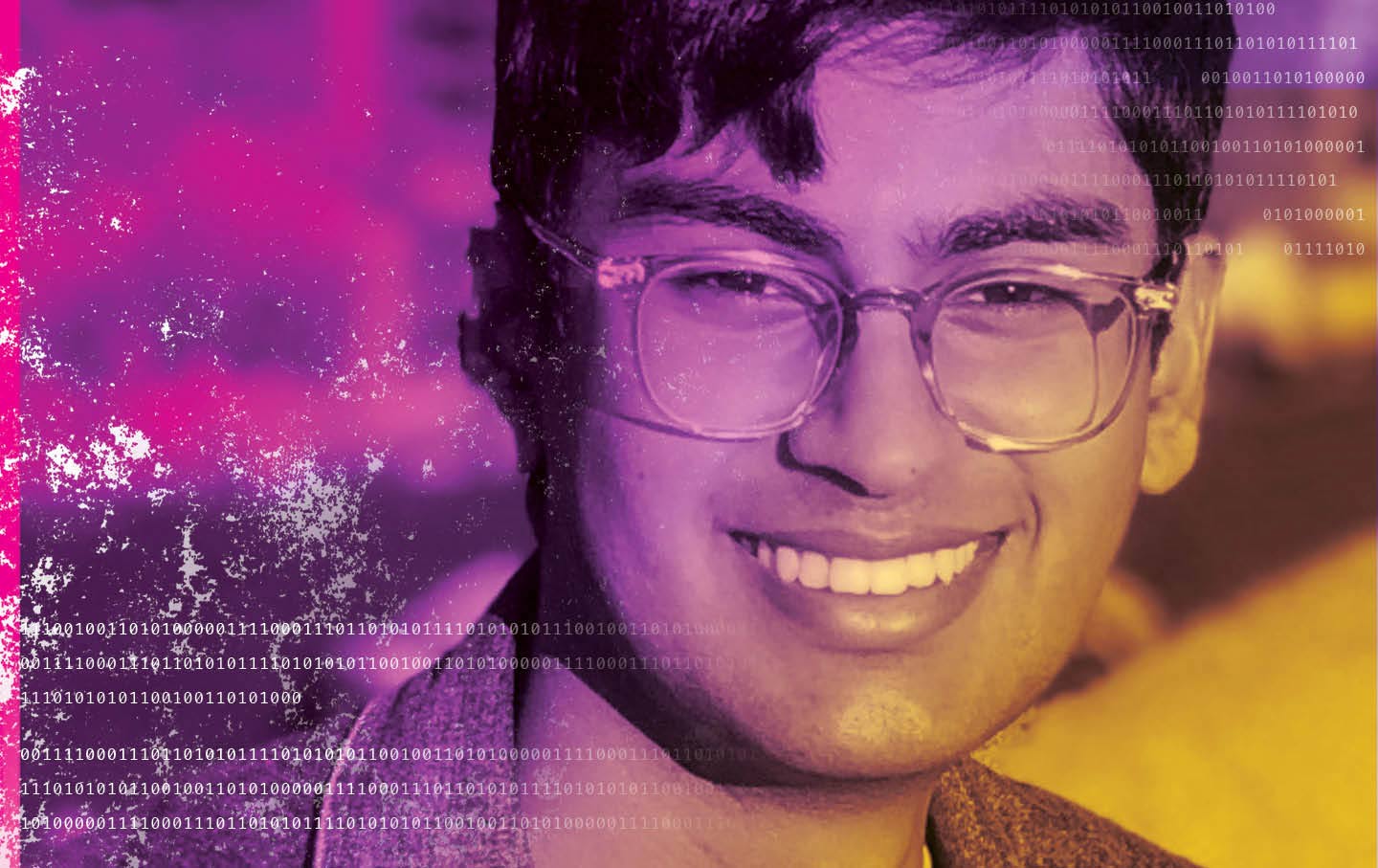

The Death of an AI Whistleblower

Suchir Balaji sought to expose OpenAI’s data abuses. Did it come at the expense of his own life?

On October 23, 2024, Suchir Balaji’s face appeared, freshly shaved, boyish but serious, partially shadowed, in The New York Times as he announced himself as a whistleblower against one of the most powerful technology companies in the world. Setting off a political earthquake in the AI industry, Balaji claimed that OpenAI, the highly touted artificial-intelligence start-up where he’d worked as a researcher for almost four years, had broken copyright laws by absorbing practically all available data on the Internet for its models. ChatGPT, the company’s mega-popular AI chatbot—as well as, potentially, its competitors—had apparently been built on a foundation of illegal activity on a mass scale. Balaji presented his supporting argument in a mathematically minded paper on his personal website.

“If you believe what I believe, you have to just leave the company,” Balaji told the Times’ Cade Metz.

OpenAI had a lot on the line, and so did Balaji. Other researchers had resigned from their positions and issued warnings about the dangers of AI going rogue in fantastical Terminator-like scenarios. But as the Times noted, Balaji was one of the few industry professionals flagging the damage that the technology was doing right now. A 26-year-old former top student at Berkeley, he was already a veteran of several AI labs and had a patent under his belt. His parents called him a humble prodigy who had taught himself programming at age 11. His future seemed boundless.

Balaji didn’t live long enough to tell his full story.

A month after the Times interview was published, Balaji was found dead in his San Francisco apartment with a gunshot wound to the head. In that brief interval, he had gone from obscure AI researcher to high-profile whistleblower under severe pressure as he defied an industry that was collectively engaged in the biggest speculative bet in American business history.

Suchir Balaji’s time in the public eye amounted to one newspaper interview, but his afterlife as a martyr has become increasingly complex, his legacy contested terrain. The San Francisco medical examiner ruled his death a suicide, but his parents, Poornima Ramarao and Balaji Ramamurthy, have repeatedly stated that their son was murdered for what he knew. The dispute likely won’t be resolved to the satisfaction of Balaji’s parents or critics of the burgeoning AI industry anytime soon. Still, it tells us a great deal about the crisis of accountability in a sector of the AI industry that has come to dominate our investment economy and dramatically alter our daily lives. The aftermath of Balaji’s death also reveals a profound and troubling failure to protect and support AI whistleblowers, and corporate whistleblowers in general.

For the Times, Balaji’s story was more than an impressive scoop. It was also a key element in its copyright-infringement lawsuit against the massive AI start-up, New York Times v. OpenAI. If the paper were to succeed in the suit, in which Balaji was listed as a potential witness, it could win a multibillion-dollar judgment and open the door to numerous similar actions against OpenAI and its competitors. From the vantage point of an industry built on the ruthless consumption of any and all data, the potential damage might be apocalyptic.

Balaji’s grieving parents have highlighted the murky circumstances surrounding his death, hiring numerous forensic consultants and lawyers in their quest to prove that their son was murdered. Some details remain disputed—Balaji’s parents claim that the crime scene was poorly tended, that there is evidence from blood and hair collected at the scene that indicates a possible struggle, and that he was shot at an angle that is inconsistent with suicide.

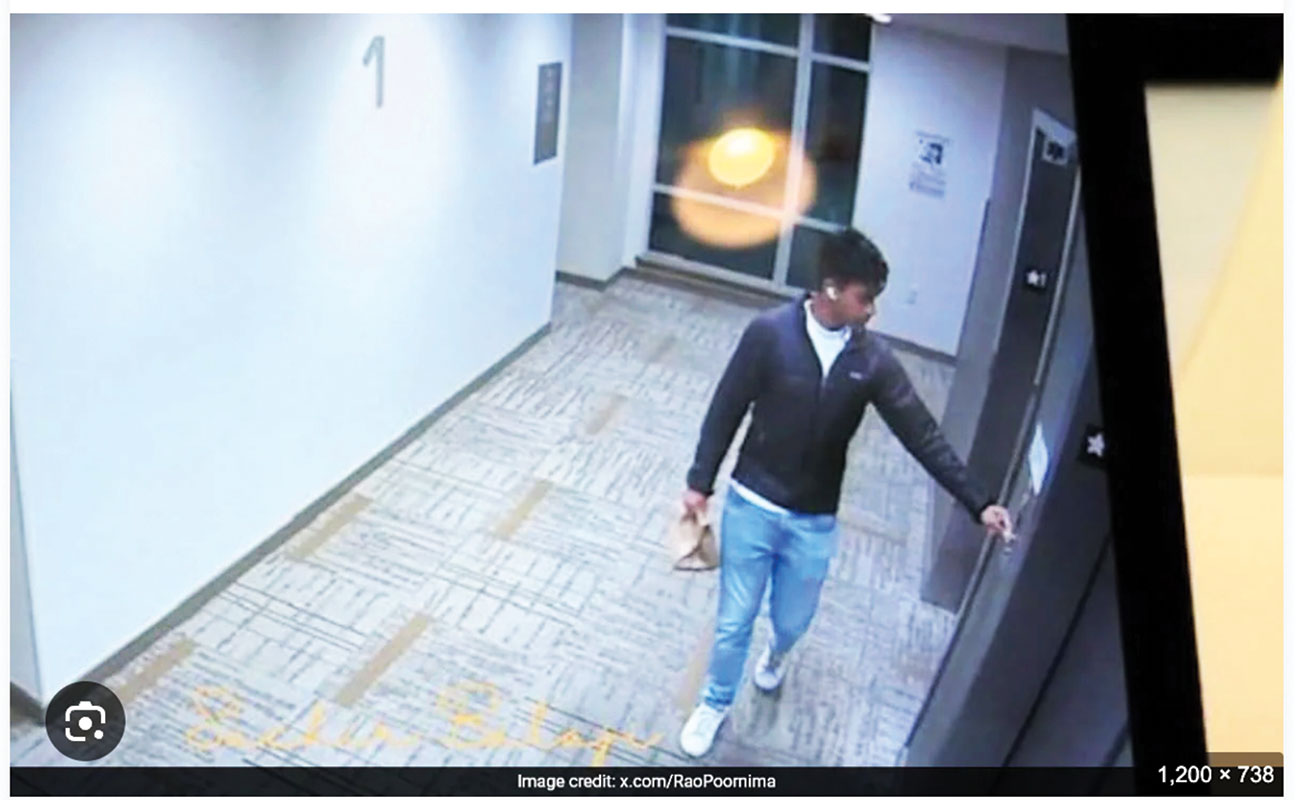

Overall, the picture is mixed. Balaji left no suicide note. His apartment was dead-bolted from the inside, and police reported there was no sign of forced entry. The day before his death, after returning from a trip with friends, he had spoken with his father on the phone and seemed happy. Security footage recorded by his building in the hours before his death shows him walking into his apartment with a takeout order. He owned a gun. In some news reports, friends described him as somewhat secretive. The medical examiner’s report found GHB in his system. While the so-called date-rape drug can be used recreationally or as a disabling agent, GHB can also appear naturally during a body’s decomposition.

The primary demand from Balaji’s parents has been for the FBI to look into their son’s death. “We know there was foul play from many factors, many data points,” Ramarao said at a public vigil in December 2024. Indicating that her son represented a threat to the AI industry, she asked for a “thorough investigation.”

For a time, Balaji’s family found support across the political spectrum. In January 2025, after a news report stated that the San Francisco Police Department (SFPD) was still investigating Balaji’s death, the left-wing San Francisco Supervisor Jackie Fielder posted on X, “I am relieved to see this case reopened. Friends and family of Suchir are welcome to get in touch with my office.” At the same time, Democratic Congressman Ro Khanna addressed Ramarao directly on X, offering his help. He later told The Mercury News that he’d had a conversation with Ramarao that led him to “believe her that there are unanswered questions.”

But in February, the SFPD closed its investigation into Balaji’s death, pronouncing it a suicide, and Democratic politicians seemed to lose interest in the case. Neither Khanna’s nor Fielder’s office responded to inquiries about their past support for a new investigation or their contact with Balaji’s family.

Ramarao has been a particularly vocal advocate for her son and has aggressively challenged officials for not doing more to investigate his death. She has spoken frequently at public events and in lengthy interviews on podcasts, YouTube shows, and Indian media. In January 2025, when Bay Area public officials were calling for a deeper investigation into Balaji’s death, Ramarao sat down for a 66-minute interview with Tucker Carlson, during which she said that her son was murdered.

Ramamurthy has offered fewer public remarks, though he is sometimes seen alongside his wife, appearing stoically subdued. At the December 2024 public memorial, he expressed concern that the United States was becoming a mafia state. “Enterprises are becoming very greedy, and compassion and caring have taken a backseat,” he said. Under the banner of the Justice for Suchir Movement, Ramamurthy has posted online about the need for “ethical AI,” and he’s started a conference, the Suchir Balaji Memorial Summit. In his X profile, he identifies himself as “OpenAI Whistleblower Suchir’s Dad,” and he’s made numerous posts with the hashtag #justiceforsuchir that point out alleged issues at the crime scene, such as the blood-spatter patterns, a disabled surveillance camera, and a window through which an assassin could have entered. His posts depict his son as a thwarted genius, the “robinhood of AI,” who called for a more humane AI revolution and was murdered for his efforts.

In their desperation to find an alternate explanation for their son’s death, Ramarao and Ramamurthy have cycled through a half-dozen attorneys and several outside investigators. They filed and then dropped a lawsuit against the SFPD. Meanwhile, they’ve gone after OpenAI on many fronts, including supporting a ballot measure that opposes its planned conversion into a for-profit company. They’ve also found some surprising allies.

Ramarao and Ramamurthy have received extensive support on the political right, which has occasionally asked the right questions, even if for the wrong reasons. But whatever genuine suspicions might surround the case, Balaji’s death has unfortunately become fodder in the political battles that are consuming the tech industry. OpenAI’s CEO, Sam Altman, and Elon Musk, who was one of the company’s cofounders, have become mortal enemies. The two are embroiled in a lawsuit based on Musk’s belief that OpenAI’s transformation into a for-profit structure will contribute to the untrammeled development of artificial general intelligence (AGI), which may doom humanity. In the meantime, Musk’s company xAI hosts Grok, an anything-goes chatbot that has helped enable a global proliferation of nonconsensual pedophilic imagery; it has also parroted Musk’s racist beliefs and even praised Hitler. Musk will apparently do anything to crush Altman, and that includes accusing him of orchestrating a murder.

In September of last year, a squeamish-looking Altman gave an exceedingly awkward interview to Tucker Carlson. Carlson called Balaji’s death “definitely murder” as Altman sweated his way through some words of sympathy for his former employee’s family. The grim exchange, in which Altman seemed oily and evasive, lit up right-wing social networks.

“He was murdered,” Musk declared on X.

The public speculation surrounding Balaji’s death went into overdrive. In the absence of an authoritative investigation, the Balaji case has become the subject of feverish conjecture by right-wing podcasters, tech moguls, and social-media conspiracy theorists, who, unlike many on the liberal left, are willing to entertain sinister ideas about the tech industry and corporate power. While they eagerly serve as ideological foot soldiers in Musk’s online army, the virtual shots they fire at OpenAI and Altman reflect a nascent public disgust with tech—one that is clearly grounded in issues of class, even if it often takes the form of paranoid murder fantasies.

Balaji’s parents have little choice but to embrace those who are willing to grant them attention and entertain their questions about their son’s death. They have repeatedly posted on X asking for help from Musk and MAGA politicians like Vivek Ramaswamy. When Carlson offered Ramarao access to a massive audience, she accepted.

Ramarao declined to speak with me when I reached out earlier this year; Ramamurthy spoke with me briefly on the phone, then hung up and never called back. Later, Ramarao e-mailed me, writing, “When we are pushed to give evidence, it doesn’t seem safe to get engaged. We don’t know who is behind this. So please give us time and space. Unless introduced by someone we trust, we won’t engage.”

Using the media to litigate the circumstances of Balaji’s death is inherently fraught. Leftists and liberals who are inclined to distrust tech moguls and the police might be attracted to the tale of a ruthless company that is willing to kill a critic. But for the Democrats who once offered the family public support, Balaji’s story seems to have faded from concern, consigned to the realm of misinformation and right-wing conspiracism, when it’s really about an isolated whistleblower challenging corporate malfeasance at the highest levels.

For the online right, the story of Balaji’s death offers all the proper villains, including a shifty gay tech CEO already locked in combat with their hero, Elon Musk, the world’s richest fascist. In their narrative, “Altman killed Balaji” has been memed into being, proven through the low-resolution photographs and heart-rending parental statements percolating through the right-wing-media ecosystem—all of it approved and boosted by Musk himself. There’s even a memecoin (a cryptocurrency token) named after Balaji; its maker claims to be supporting the family’s cause.

With every opportunity the right gives Balaji’s family to press their case, the search for justice seems to slip deeper into the political swamp—into the confusion and uncertainty that then gets amplified by yet another investigator producing yet another report dissenting from the SFPD’s official line.

“The only person in his life Suchir was ever not happy about was Sam Altman,” Ramarao told the MAGA-affiliated podcaster Patrick Bet-David, who has 2.89 million subscribers on YouTube. “So Suchir knew something.”

“There’s an AI war going on,” Bet-David added, characterizing Balaji’s death as practically a gangland killing.

With his death suffused in rumors, misinformation, and his parents’ righteous grief—all stirred in the pot of the world’s richest troll—Suchir Balaji has become the haunted face of tech whistleblowing and the AI boom. But his death reflects a wider dysfunction in American industry, where, as once-durable liberal institutions crumble, corporate and government power becomes unaccountable, synonymous with corruption and criminality. The risk goes beyond one company, industry, or complaint; Balaji’s story fits into the broader saga of whistleblowers who face daunting odds against proving their claims. Whistleblowing is a solitary, psychically dangerous pursuit, and more would-be truth-tellers have succumbed to the malign forces marshaled against them—gaslighting, intimidation, legal threats, financial pressure—than to a hit man’s bullet.

On the morning of March 9, 2024, John Barnett, a whistleblower who had worked on the 787 Dreamliner aircraft at Boeing, was found dead of a gunshot wound in his truck outside a hotel in Charleston, South Carolina. Barnett had spent the previous two days testifying against Boeing, alleging that the company had used substandard parts while building the 787 and ignored safety protocols, and he was scheduled for more testimony that day. His death immediately provoked widespread speculation that he’d been murdered. But the physical evidence, comments from his family, and Barnett’s lengthy suicide note indicate that he likely killed himself.

Barnett spent the last night of his life in his truck, writing about his fury and desperation in a sprawling, chaotic letter that was found at his side. The text runs at all angles; a reader has to turn some pages around to make out the words. But they are clearly the final thoughts of a profoundly depressed man saying that he could no longer carry on but wanted his family and friends to know that he was at peace.

“America, come together or die!!” Barnett wrote on one page. “I pray the motherfuckers that destroyed my life pay!!! I pray Boeing pays!!!”

The same page contains a remark scrawled sideways between the lines about Boeing, and it says as much about Suchir Balaji’s ordeal and those of so many others as it does about Barnett’s. “The entire system for whistleblower protection is fucked up too!” Barnett wrote.

Mary Inman, an attorney at the law firm Whistleblower Partners, has the same concern. She’s a cofounder of an initiative called Psst.org, which aims to encourage action by bringing together whistleblowers from the same company or institution. Despite the canonization of whistleblowers as lone heroes in movies, many corporate and government scandals involve more than one person sounding the alarm, with potentially different fates befalling them. Collaboration and mutual support can be crucial.

“There’s a first-mover problem with whistleblowing, particularly in AI, where the stakes are so high,” Inman said. Psst.org offers an “information escrow” service, a kind of digital lockbox where someone can submit a complaint or memo about wrongdoing. Whistleblowers can use the service if they have sensitive information but don’t feel comfortable raising the issue on their own. If multiple people from the same institution submit information, the service can bring them together. The organization also offers other forms of support, such as media training and connections to therapists.

“We want to disrupt and change how whistleblowing happens and make it a collective act,” Inman said, pointing again to OpenAI, where months before Balaji came forward, a group of researchers resigned together, criticizing the company’s unsafe practices. “And in so doing, we’ll be able to bring more people forward.”

The AI industry is lightly regulated, with fewer safeguards to protect the public’s interests than more established fields. Backed by the tech industry, President Donald Trump’s administration has attempted—first via legislation, then through an executive order—to prohibit individual states from instituting their own AI regulations. Inside these companies, there are enormous financial incentives to say nothing, and many AI professionals subscribe to an austere, almost cultish faith in the world-changing power of artificial general intelligence.

Sophie Luskin, a researcher at the Princeton Center for Information Technology Policy, said that the absence of any legislation that applies specifically to whistleblowers in the AI industry is a major problem. “With employees in the AI sector, they find their rights and protections are particularly unclear and confusing,” she said.

Will they be protected, for example, if they report potential harms or ones that haven’t happened yet? What about something that might harm the public but doesn’t violate the law, such as the information related to Secretary of War Pete Hegseth’s pressure campaign against the AI company Anthropic for refusing to allow its software to be used for autonomous weapons and other lethal purposes?

The bipartisan AI Whistleblower Protection Act, introduced in May 2025 by Senators Chuck Grassley (R-IA) and Amy Klobuchar (D-MN), among others, was supposed to fill in these gaps, codifying both the kinds of harms that AI whistleblowers might report and the protections they should be afforded. The act “has very good bones that have strong precedent in other existing laws,” Luskin said. But it has been stuck in committee for nearly a year.

“We really miss out on the ability to hold these companies accountable when we don’t have effective and comprehensive whistleblower protections,” Luskin said. “People who work at these companies know what’s going on.”

Now, as Balaji’s story lapses into myth, there remains little accountability for his death—and for the potential crimes that he sacrificed so much to expose. OpenAI, which has expressed sympathy over Balaji’s death, remains the industry’s hottest start-up and is currently seeking up to $100 billion in investment capital. Lawsuits and whistleblower complaints seeking to publicize alleged abuses at the company—including one submitted to the SEC regarding alleged violations of securities laws—have done little to stem its rise.

Perhaps this is the inevitable outcome of actions undertaken by isolated, principled individuals trying to slow the freight train of highly leveraged technological innovation. At the December 2024 public memorial for Balaji, where she called for an FBI investigation into her son’s death, Ramarao offered a spirited tribute. She explained to the crowd that her child had followed his sense of right and wrong to the only place it could take him:

> The most important thing is he was a witness. He was a witness for a critical case. And even that’s not taken into account, and that’s the most frustrating [thing] for us. What’s the value of speaking truth if by speaking truth someone could lose their life? Do we never encourage our children to stand up for truth? Is there any protection? So whistleblowers’ lives don’t matter? We’re ready to lose them. I don’t know how I could have saved my son by teaching him to tell lies. The ethics with which I raised him took his life today.

Ramarao’s words reflect more than the loss suffered by a grieving parent. As AI rampages through so many sectors of American life and continues to disfigure the basic foundations of creative and intellectual labor, the issues raised by Suchir Balaji are more urgent than ever. But absent any real political action against unchecked corporate power, Balaji’s story is more likely to be remembered as a right-wing meme than as a catalyst for reform.

Your support makes stories like this possible

From illegal war on Iran to an inhumane fuel blockade of Cuba, from AI weapons to crypto corruption, this is a time of staggering chaos, cruelty, and violence.

Unlike other publications that parrot the views of authoritarians, billionaires, and corporations, The Nation publishes stories that hold the powerful to account and center the communities too often denied a voice in the national media—stories like the one you’ve just read.

Each day, our journalism cuts through lies and distortions, contextualizes the developments reshaping politics around the globe, and advances progressive ideas that oxygenate our movements and instigate change in the halls of power.

This independent journalism is only possible with the support of our readers. If you want to see more urgent coverage like this, please donate to The Nation today.

More from The Nation

Why Gen Z Is Turning to Christian Influencers Why Gen Z Is Turning to Christian Influencers

Bryce Crawford, a tattooed Evangelical influencer, built a devoted young following out of algorithms, TikTok despair, and generational loneliness.

Steven Thrasher on Why We Must Think Past Skin-Deep Identity Politics Steven Thrasher on Why We Must Think Past Skin-Deep Identity Politics

The author of The Overseer Class discusses how people in marginalized groups can “mistake representation for liberation and confuse visibility with safety,” as Kwaneta Harris put ...

Chud the Builder and America’s Tradition of White Racial Terror Chud the Builder and America’s Tradition of White Racial Terror

We are not in unprecedented territory. We are returning to form.

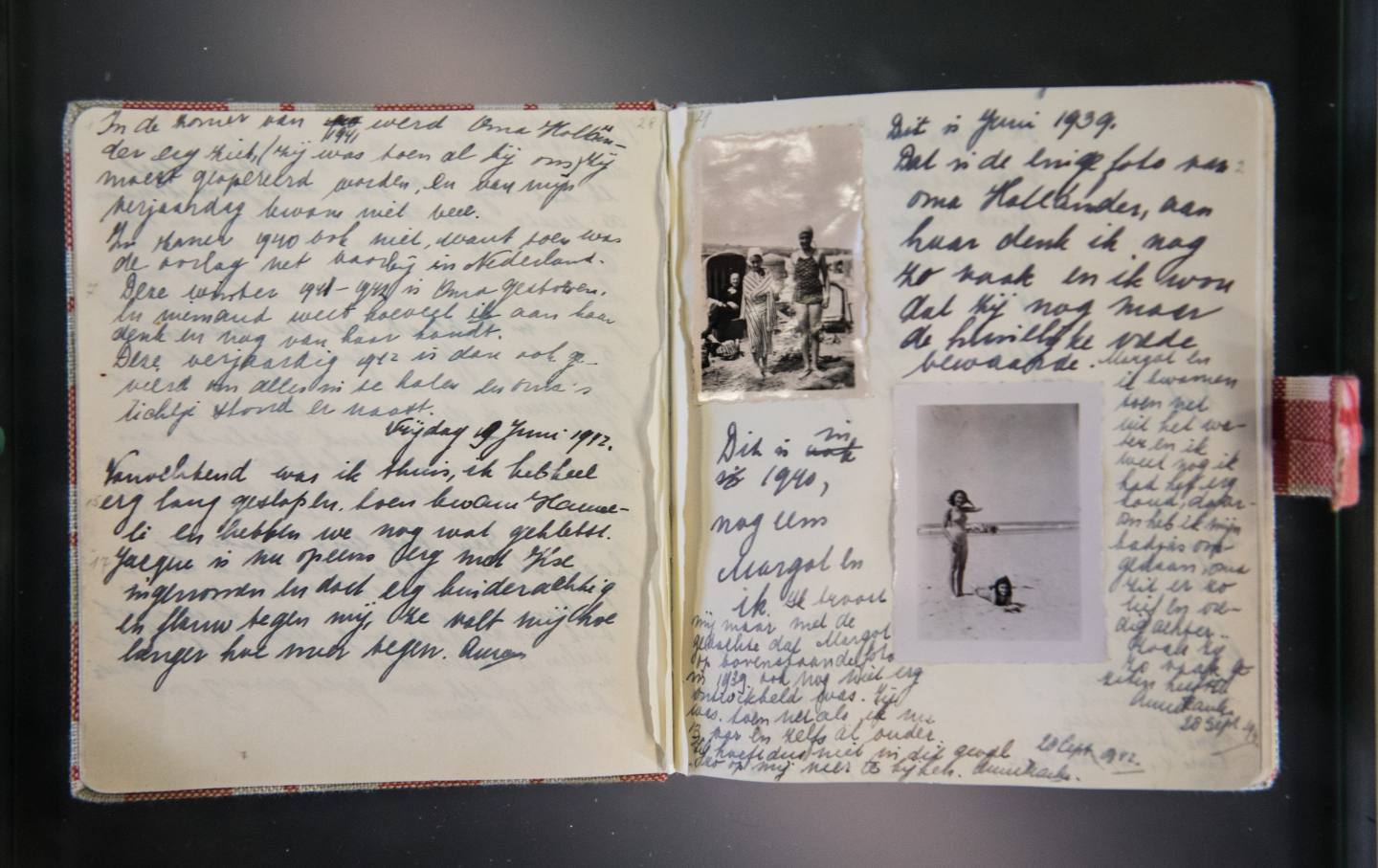

The Magical, Mysterious World of Archives The Magical, Mysterious World of Archives

Archives are where forgotten lives, hidden histories, and unfinished stories wait to be rediscovered.

The Enduring Legacy of Rudy Acuña The Enduring Legacy of Rudy Acuña

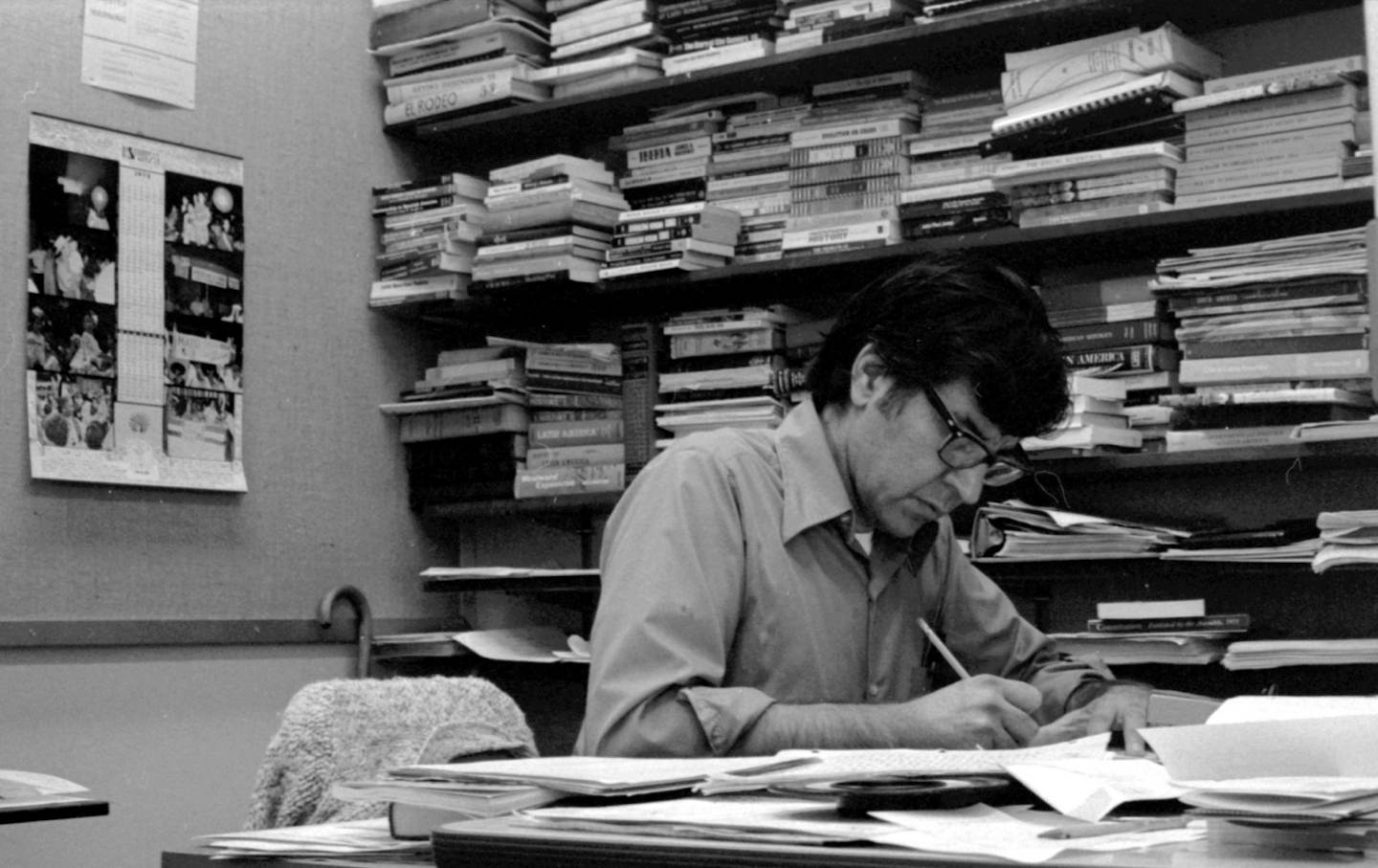

The pioneering Chicano studies scholar, who died in March, reshaped the writing of history.

Trapped in “el Pozo”: As Overcrowding in ICE Detention Increases, So Does Solitary Confinement Trapped in “el Pozo”: As Overcrowding in ICE Detention Increases, So Does Solitary Confinement

The spike in solitary confinement epitomizes the abuses of a migrant detention system that seems to be spinning out of control.