Garbage In, Carnage Out

The harrowing lessons of the Pentagon’s recently dissolved partnership with Anthropic.

Anthropic touts its alliance with the American imperium in happier times for the company.

(Photo illustration by Li Hongbo / VCG via Getty Images)

It’s been a dizzying few weeks for the AI firm Anthropic. After a barrage of MAGA-led tantrums, the company lost its $200 million contract with the Pentagon by refusing to suspend key safeguards within its operating system that protect it from manipulation by bad actors; in terminating the deal, Secretary of Defense Pete Hegseth claimed that the AI lab posed a “supply chain risk to national security.”

But it appears that risk was short-lived, at least when it comes to a new intervention in the Middle East. As the Trump administration launched its invasion of Iran, the military reportedly relied on Anthropic’s AI technology to identify targets and coordinate bombing attacks. The whole episode speaks volumes about our failure to reckon with the true scale and implications of the AI sector’s growing dominance over all facets of American life—including the fateful life-and-death decisions entrusted to the country’s military-industrial complex. As the MAGA war complex and the Silicon Valley elite battle over the finer points of Anthropic’s role in modern war-making, the larger story remains unchanged: AI overseers are enthusiastic partners in a morally disastrous campaign to insulate the most destructive decisions that military commanders make from their actual consequences. And as usual, the casualties often marked for elimination in our emerging post-human war-making regime are powerless civilians on the ground.

None of this has entered into the high-profile spat between Anthropic and the Department of Defense. When news of the company’s breach with the Pentagon broke, AI boosters and tech analysts embarked on a fervid round of wishcasting, depicting Anthropic and company CEO Dario Amodei as swashbuckling defenders of responsible data collection against the forces of government surveillance and repression. “Dario Amodei lost his tender with the Pentagon but the Anthropic CEO held onto his beliefs and cemented his reputation as a man of courage,” Russian dissident and former chess grandmaster Garry Kasparov wrote on his Substack, having convinced himself that the contretemps was “a story bigger than Iran.” Meanwhile, Anthropic’s AI chatbot app, Claude, shot to the top of the charts on the App Store and Google Play.

It didn’t hurt Anthropic’s case that its opponents seemed to be doing their best impressions of monologuing cartoon villains. “The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War,” Trump thundered on Truth Social. Undersecretary of War for Research and Engineering Emil Michael declared on X that Amodei was a “liar” with a “God-complex” who “wants nothing more than to try to personally control the US Military and is ok putting our nation’s safety at risk.”

Yet the heavy-breathing partisans on both sides of the Pentagon-Anthropic spat have fundamentally misread the tech-military alliance they think they’re describing. Before we hand Amodei the Nobel Peace Prize that Trump so desperately covets, it’s worth remembering that Anthropic isn’t some innocent tech ingenue that’s been dragged into a slap-fight with Trump and Hegseth. The $380 billion company had been an enthusiastic, voluntary participant in Trump’s war machine, signing its Pentagon contract, eyes wide open, in July 2025, long after it was abundantly clear just what Trump 2.0 was all about.

The honeymoon went sour sometime in January, when the administration decided, Darth Vader–style, that it needed to alter the deal it had agreed to. Pentagon officials demanded the removal of wording from the Anthropic contract designed to ensure that Claude was not used for mass domestic surveillance or to guide fully autonomous weapons designed to kill without human oversight.

Anthropic said no to these demands, more than once. Hegseth, always in Fox News grievance mode, grew increasingly peeved at the company’s insolence, as well as at the predominance of Democrats in the company’s C-suite, some of whom occasionally said things about the Trump regime that it didn’t like. In a speech in January announcing the Pentagon’s new partnership with Elon Musk’s xAI, Hegseth muttered darkly about the evils of “equitable AI” with “DEI and social justice infusions…that won’t allow you to fight wars.” Insiders told Semafor’s Reed Albergotti that Hegseth was indeed referring to Anthropic and its refusal to grant the Pentagon carte blanche access to its tech.

Elon Musk, of course, had no such qualms as he rushed to cut his own AI deal with the military. In his Pentagon contract, Musk agreed to the use of xAI’s chatbot Grok “for all lawful purposes”—not exactly a reassuring standard given the administration’s rather cavalier attitude toward legality, very much including the unconstitutional invasion of Iran. It’s also not exactly reassuring to imagine the unreliable and ethically challenged chatbot that once called itself “MechaHitler” in charge of a fleet of fully autonomous killing machines.

The standoff between Anthropic and the administration came to a head last Friday, with Trump announcing in a typically unhinged message on Truth Social that “I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology,” by which he meant sometime over the next six months in the Pentagon’s case. “Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow,” he threatened.

Shortly afterward, Hegseth piled on with his declaration that the company was a “supply chain risk”—a designation typically reserved for companies run by autocratic enemy governments. The defense secretary went on to say his order would prohibit all companies doing business with the military from using Anthropic’s tech—a lurch into commercial he-man cancel culture that is almost certainly illegal. Why the government would demand for itself the unrestricted use of a tech that it thought was an immediate security risk is a topic I imagine will be discussed in some detail in court.

All the administration’s complaints about “woke AI” aside, the idea that Anthropic is a company run by a bunch of peaceniks that had somehow backed into the role of pulling down massive Pentagon contracts does considerable violence to the reality of the situation. Like other big-ticket defense vendors, Anthropic had actively sought its Pentagon contract, and had in fact already licensed its technology to Palantir, a surveillance tech company named after the “all-seeing” stone used by the evil wizard Saruman to keep track of his enemies in the Lord of the Rings. Palantir, founded by Silicon Valley anti-democracy troll and end-time enthusiast Peter Thiel, has become notorious for, among other things, its work with ICE, and for enabling the Israeli government to track and kill Palestinians in the Gaza genocide.

Even before Claude began mapping out the bombing attacks in Iran, the US Central Command had used it during the attack on Venezuela that kidnapped Maduro and dropped him in the Metropolitan Detention Center in Brooklyn. In the Iran attacks, the Claude-Palantir software partnership has yielded civilian casualties already numbering in the high hundreds, including many of the students at the Shajareh Tayyebeh girls’ elementary school in the southern Iranian city of Minab. This isn’t by any stretch of the imagination a breach of Anthropic’s contract with the Pentagon; it’s precisely what all parties signed up for.

Indeed, last Thursday, a day before the final rupture, Amodei released a decidedly un-woke statement seemingly intended to remind the government that Anthropic was happy to be part of the Trump war machine. Making considerable use of the administration’s favored martial lingo (“Department of War,” “warfighters”), Amodei assured his readers that he “believe[s] in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries.” To that end, he went on to explain,

Anthropic has…worked proactively to deploy our models to the Department of War and the intelligence community. We were the first frontier AI company to deploy our models in the US government’s classified networks…and the first to provide custom models for national security customers. Claude is extensively deployed across the Department of War and other national security agencies for mission-critical applications, such as intelligence analysis, modeling and simulation, operational planning, cyber operations, and more.

He made abundantly clear that in all but “a narrow set of cases” he was down with whatever the Pentagon had in mind for Claude. This included using the company’s tech for the mass surveillance of foreigners—but not American citizens. And, as he explained in detail, he was also fine with the use of Claude for “[p]artially autonomous weapons, like those used today in Ukraine,” which he said were “vital to the defense of democracy.”

And while Amodei noted, with some understatement, that current “frontier AI systems are simply not reliable enough to power fully autonomous weapons,” he asserted that there was every reason to believe that in the future “[e]ven fully autonomous weapons (those that take humans out of the loop entirely and automate selecting and engaging targets) may prove critical for our national defense.” In other words, damn the torpedoes, and bring on the killbots!

The appeal of using artificial intelligence to make decisions or recommendations on the battlefield is not only due to its incredible efficiency. It’s also that AI offers a certain moral buffer to those using it. The technology creates the illusion of an arm’s-length distance between Pentagon war planners and the consequences of their decisions; with a chatbot whispering in their ears, they’re able to pretend that they’re not truly responsible for any innocents they might recklessly kill, because they were only following the expert advice of the machine. That’s a valuable alibi—even when the bombing engineers, like the rest of us, know that the AI we have today is given to lapsing into strange hallucinations and errors.

Ironically, by insisting that the Pentagon keep humans “in the loop” when selecting which people to kill, Anthropic is insisting on a different sort of moral buffer for itself. The logic is simple: You can’t blame Claude—or, more to the point, its makers—for killing innocents if a human being ultimately has to pull the proverbial trigger on Claude’s “suggestions.” In practice, of course, people tend to defer to the supposed expertise of the machine, especially amid the fog of war, when they may have mere moments to make their life-or-death decisions. A devastating 2024 investigative report by +972 Magazine and Local Call on the Israeli government’s use of its own bespoke AI in Gaza drove the point home: “One source stated that human personnel often served only as a ‘rubber stamp’ for the machine’s decisions, adding that, normally, they would personally devote only about ‘20 seconds’ to each target before authorizing a bombing—just to make sure the [AI]-marked target is male.” (And thus, presumably, more likely to be Hamas.) In other words, the moral buffers coveted by Pentagon war planners amount to little more than a 20-second rubber stamp in the orchestration of mass death on the ground. In Gaza, this demented death-optimization logic has produced 75,000 fatalities; in Iran, the body count is just beginning. Or to put this all in terms Silicon Valley is more apt to understand: garbage in, carnage out.

More from The Nation

The Honeymoon Is Over Between Trump and Europe’s Far Right The Honeymoon Is Over Between Trump and Europe’s Far Right

Viewing an alliance with Trumpist America as a liability.

The Iranian Diaspora Is Fracturing Over Trump’s War The Iranian Diaspora Is Fracturing Over Trump’s War

Friendships have ruptured. Families have bitterly split around the kitchen table. Violent speech has become the dominant mode of discourse.

Only One Side Has Clearly Broken the Law In the Strait of Hormuz Only One Side Has Clearly Broken the Law In the Strait of Hormuz

And it isn’t Iran.

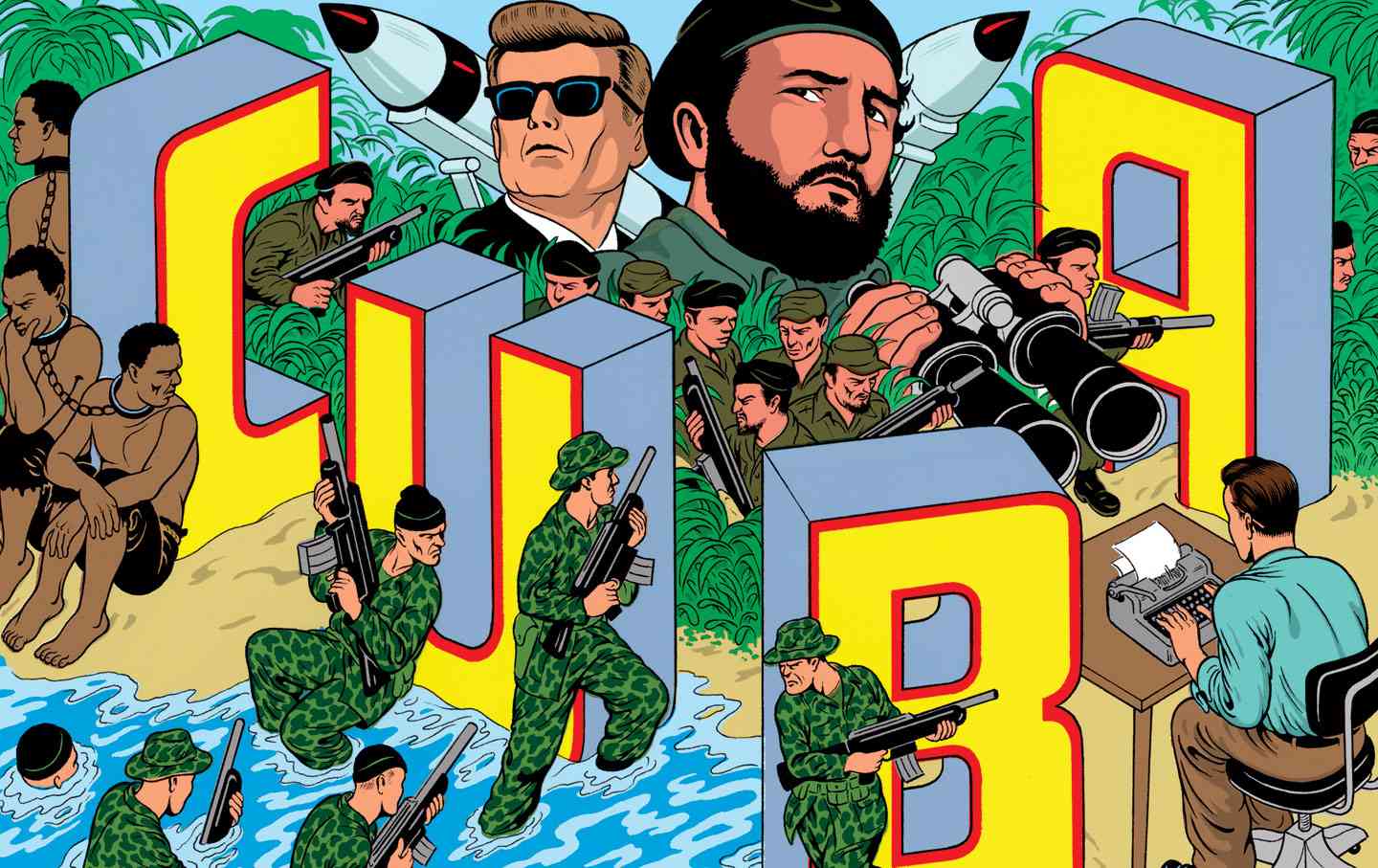

Lessons of the Bay of Pigs Lessons of the Bay of Pigs

The infamous paramilitary assault remains a cautionary Cold War history.

The Blockheaded Thinking Behind Trump’s Plan for a Hormuz Blockade The Blockheaded Thinking Behind Trump’s Plan for a Hormuz Blockade

The president’s latest proposal to force Iran to negotiate an end to his feckless war somehow makes less sense than all the other ones.

In Lebanon, Grief Is Everywhere. But So Is Our Defiance. In Lebanon, Grief Is Everywhere. But So Is Our Defiance.

We are reeling from Israel’s massacres. But people are tirelessly organizing on the ground, and are not giving up.