AI for the People

A manifesto for an AI revolution that works for the many, not just the billionaires.

The AI revolution is destined to transform human society in ways that most of us cannot begin to fathom. The changes to come will be every bit as daunting as what the world saw in the industrial and digital revolutions. Yet our policymakers are ill-prepared—and, in the case of our president, dramatically unwilling—to ensure that these changes benefit everyone rather than a tiny cabal of hyper-wealthy tech oligarchs.

To meet this challenge, we must develop a new social contract that begins with the basic premise that artificial intelligence must serve humanity, not the bottom line of a billionaire class that seeks to become a trillionaire class at our expense. We cannot allow technological overlords to build a society where AI “progress” is defined by their wealth rather than by our democracy.

I make this argument as a member of Congress who represents Silicon Valley, the home of companies with more than $18 trillion in market capitalization—more than one-quarter of the entire US stock market—and five that are worth more than $1 trillion each. I know tech billionaires, I know the people who are benefiting from the AI revolution’s massive upward redistribution of wealth, and I know that more than a few of them believe they have a divine right to lead and rule. But that cannot be our future.

We need to tax extreme wealth in order to meet human needs, which is why I support the proposed one-time 5 percent wealth tax on California billionaires (while not taxing voting shares or illiquid gains) and have proposed federal legislation to raise $4.7 trillion in revenue by taxing billionaires and another $2 trillion by making corporations pay their fair share. I have challenged my fellow members of Congress to support this legislation with the argument that if the representative from Silicon Valley can stand up for billionaire taxes, it shouldn’t be that hard for other House members and senators to do the same.

Just as important, I know—as a former deputy secretary in the Obama administration’s Commerce Department who has spent the past decade focusing on the economic and social disruptions caused by AI—that politicians, unions, civil-rights groups, faith communities, and grassroots activists must act urgently and aggressively to create laws, regulations, and incentives that prioritize humans over machines, protect the mental health of our children from social-media slop, stop algorithmic rent increases and predatory pricing, and prevent American jobs from being sacrificed in order to enrich oligarchs.

AI is evolving so rapidly that even its intellectual pioneers are unsettled. Geoffrey Hinton, the Nobel laureate in physics who’s known as the “godfather of AI,” quit his position at Google in 2023 and warned that AI-generated content could overwhelm the public discourse with misinformation and, ultimately, pose an existential threat to humanity. Stuart Russell, the British computer scientist who literally cowrote a textbook on AI, now worries that AI development is “intrinsically unsafe.”

Some of the people behind the most sophisticated AI technologies are also scared. After the Department of Defense asked to use Anthropic’s Claude chatbot for domestic mass surveillance and autonomous warfare, the company’s CEO, Dario Amodei, said that he will not allow the technology to be used for either purpose. But what about all the other AI companies and tech leaders lining up for defense contracts and letting their products be used to kill people—as has already happened in Gaza?

Clearly, we all must start asking some fundamental questions about AI, as Senator Bernie Sanders (I-VT) did when we held our “Who Controls the Future of AI: The Oligarchs or the People?” town hall at Stanford University in February. “If AI is going to replace a lot of the work that human beings do, what becomes of human beings?” the senator said. “Are we superfluous in the process? What happens to our ability to relate to each other?”

We also have to acknowledge, in the words of Sanders—who, after 35 years in the US House and Senate, knows Capitol Hill better than anyone—that “Congress and the American people are very unprepared for the tsunami that is coming.”

Wrestling with these questions, and preparing for the tsunami, is far too important to be left in a few private hands. Unfortunately, Donald Trump and too many Republicans in Congress don’t see it that way. They want to hand the tech-industry elites a blank check to develop AI in ways that give them more wealth, more power, and more control over our future. In December, after Congress rejected the administration’s repeated attempts to slip anti-regulation language into federal legislation, Trump issued an executive order that authorizes US Attorney General Pam Bondi and the Department of Justice to sue states, overturn AI safety regulations, and put consumer-protection laws at risk. If states succeed in keeping their laws on the books, Trump has ordered federal regulators to withhold federal funds that have been allocated for building out broadband infrastructure.

State attorneys general will defend state-level regulations, and they’ll win their share of court battles. But merely saying no to Trump’s executive overreach is insufficient. Democrats must provide an alternative vision that connects with independents and responsible Republicans by speaking to the practical concerns that the American people have about AI.

So how do we answer those questions? How do we prepare for—and hopefully avert—the tsunami that Sanders referred to? I believe that we have more of the answers than commentators imagine—and that we can find additional answers by making AI debates central to our politics.

We must frame the progressive alternative to Trump’s dangerously naïve and irresponsible “blank check” agenda. To that end, both at Stanford with Senator Sanders and in conversations and meetings with academics, union leaders, and grassroots activists across the country, I’ve been making the argument for a new social contract to address the defining issues of our time: inequality and AI.

Let’s begin by acknowledging that we live in a new Gilded Age. Tech billionaires, who believe that in a different era they would have been heroic conquerors, are wresting control of our economy, our media, and our politics from the American people. And despite the growing popular concerns over AI, they are tightening their grip on the control of our future.

Most Americans feel they have little say in shaping that future for themselves, let alone for their kids. This has contributed to anger, resentment, and a hopeless cynicism about these issues. In a January Economist/YouGov poll, more than half of the Americans surveyed said that the gap between rich and poor in America was “a very big problem” (while only 6 percent said it wasn’t a concern). An April 2025 Pew survey found that, by a nearly two-to-one margin, people expect AI to harm rather than benefit them. Why would they think that? Perhaps because they’ve seen the headlines generated by Amodei’s prediction that half of entry-level white-collar jobs could be eliminated by AI in five years.

No nation can survive like this—with islands of prosperity amid seas of despair.

The economist Gabriel Zucman has shown that today’s concentration of wealth is the highest it has been since the 1920s. About 19 billionaires have amassed $3.3 trillion—the equivalent of 10 percent of all the goods and services that are produced in the US in a year. This is nearly three times more than the wealthiest Americans were worth relative to the size of the economy at the peak of the Gilded Age. Extreme wealth forms an unholy alliance with power, leading to two tiers of justice and stripping ordinary citizens of an equal voice in our democratic experiment.

Stanford University, where I once taught economics, is the epicenter of this wealth concentration and, not coincidentally, AI innovation. The 15-mile radius around the campus is home to Apple, Google, Nvidia, Broadcom, and Meta. One-third of the S&P 500’s value originates in this place.

This is one of the reasons why, when Senator Sanders and I appeared at Stanford, I reminded the students and faculty, “We see the future from here. We know what’s coming in a way that many politicians and DC bureaucrats simply can’t see. What kind of future are we going to build? Will this future be only for the tech lords or for all of us?”

The new tech social contract that I propose begins with an understanding that to whom much is given, at least a little is expected in return.

None of this makes us anti-technology, let alone anti-innovation.

We can acknowledge that tech entrepreneurs have taken risks and shown skill and imagination in scaling and adopting AI technology. But, as with every successful generation of American entrepreneurs over the past two centuries, their progress stands on a foundation of public investment. For instance, taxpayer dollars, as well as philanthropic donations, funded the development of AI at Stanford, where ImageNet and the Digital Library Project helped give birth to Google.

That is why we must ask not what America can do for Silicon Valley, but what Silicon Valley must do for America.

The AI revolution could help cure cancer and rare diseases, slash housing costs, make it easier to start businesses and open factories, address our energy needs, and lower medical and educational costs for the working class.

But if we leave it in the hands of a few billionaires, their priority will be to eliminate jobs, extract profits, and addict us to outrageous content that turns us from citizens into combatants.

That’s not the future I want. I am not an AI accelerationist. Nor am I an AI doomer.

I am an AI democratist.

The future must not be written by AI agents that serve only San Francisco billionaires. It must be written by all of us, together, in a way that heals our national divides; spreads prosperity through every community in this country, from rural towns to big cities; allows the middle class to grow and thrive; and keeps the oligarchs from dominating our society.

To that end, I have laid out seven principles for what a democratic AI should look like.

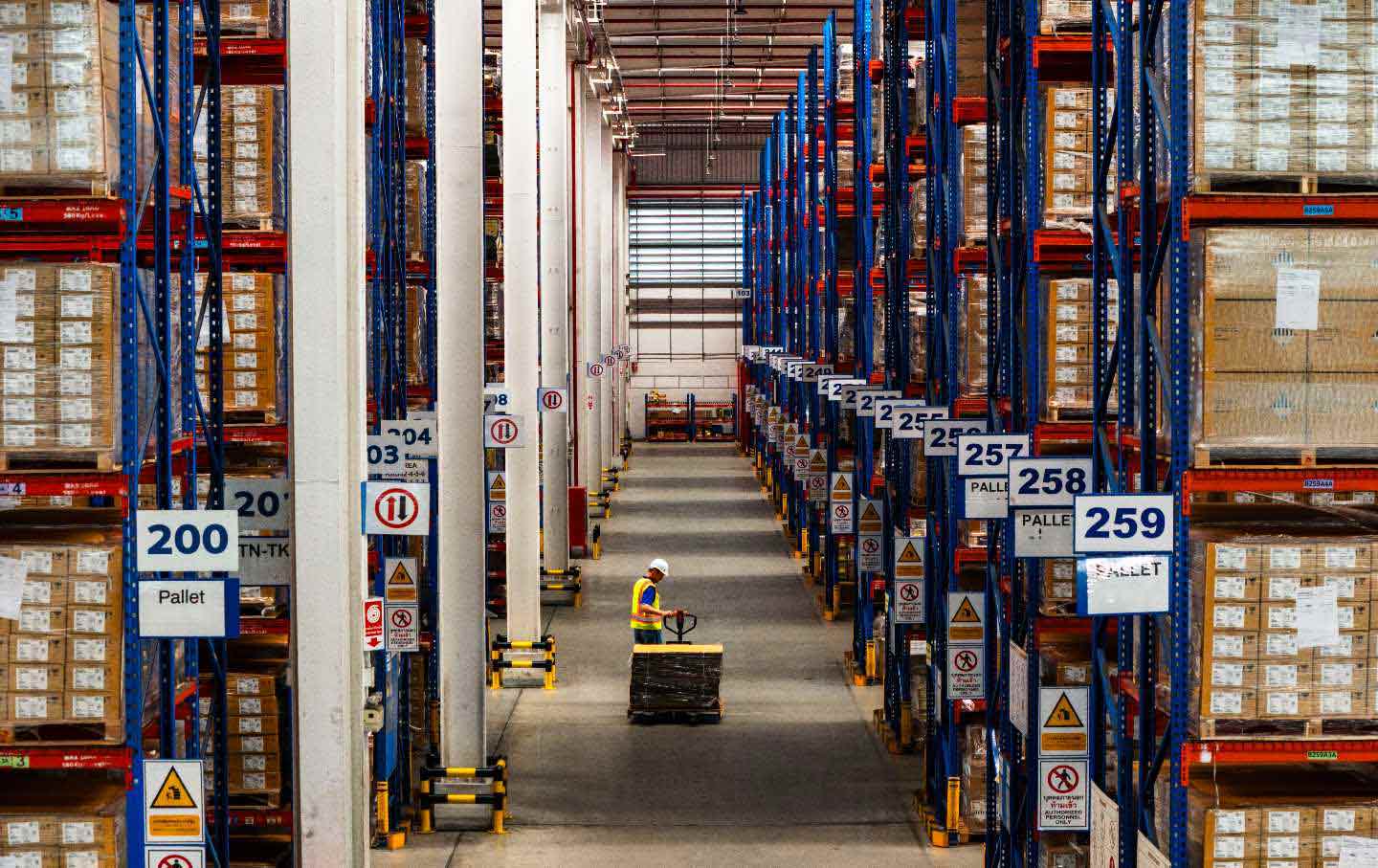

First, we must keep humans in the loop. We need real protections against mass job displacement, beginning with the 3.6 million truck drivers who face the loss of their livelihoods as autonomous vehicles hit the road. Even as self-driving trucks improve safety and efficiency, human drivers must remain in charge, just as pilots must still fly our planes. This will allow us to develop AI that augments human capability instead of eliminating jobs.

Second, every large company must bargain with its workers. Unions and elected representatives should ensure that displaced workers move into new value-creating roles and can benefit from AI’s productivity gains through higher wages, profit sharing, and shorter workweeks.

Third, we have to fix the tax code’s anti-human bias. Robots get accelerated tax depreciation, but hiring humans comes with payroll taxes. The economist Daron Acemoglu estimates that companies typically pay 5 percent or less in taxes on digital tools, while paying as much as 30 percent in taxes when hiring humans. This makes no sense. We must make it easier to hire workers, not robots.

We also need to create an annual data dividend so that every American gets a check from the data they generate, both for private businesses and for government activities like public health, traffic management, and policy research.

Fourth, we must launch a Future Workforce Administration. Just as President Franklin D. Roosevelt did during the Great Depression, we must seize this moment of anxiety among white-collar and blue-collar families alike and answer it with the boldest, most patriotic jobs agenda in generations.

Funded by a modest wealth tax on the trillions created in Silicon Valley and by a token tax on AI used by businesses that displace labor, this program will put Americans to work in public service. The initiative will drive moon-shot projects that expand the frontiers of science, clean energy, and biotech. It will also mobilize young people to rebuild towns, teach our children, provide childcare and eldercare, and strengthen small businesses in every community.

And we will launch 1,000 new trade schools and tech institutes—so the next generations are prepared for careers that AI can’t replace.

Fifth, data centers must serve the communities that power them. Right now, the wealth generated from data centers flows directly to mega-corporations without benefiting working people. That must end.

Tech companies need to invest deeply in the areas providing them with such riches, rather than merely lining their pockets. They must provide computing resources for schools and libraries, create local tech jobs and fund startups, and use renewable energy and dry-cooling technology to lessen the enormous toll that data centers exact on the environment and the water supply. We should look to what Singapore has done with its data centers and invest in massively increasing the supply of clean energy. Most importantly, tech companies must pay their full electricity bills instead of shifting those costs onto our communities.

Sixth, we must prevent AI from weaponizing public discourse. We must unite across party lines to stop engagement-driven algorithms from spreading hate. We should eliminate Section 230 of the Communications Decency Act of 1996 so that we can regulate amplified violent content. And we should require platforms to open up so Americans can connect freely across them.

Seventh, we must regulate AI so it is used to improve humanity, not damage it. We need clear, enforceable guardrails with mandatory third-party verification of advanced AI models to ensure that this powerful technology does not cause serious societal harm. This needs to be more than the voluntary collaboration taking place at the federal Center for AI Standards and Innovation. We need a robust federal agency to regulate AI as we do nuclear energy or aviation.

Along with fair taxation of corporations and billionaires, these principles provide a framework to help ensure that AI does not usher in a level of wealth and power concentration that further rips apart our democracy. If we continue with the status quo or adopt poll-tested incrementalism, we will leave ordinary Americans out in the cold, and prosperity will be only for the privileged. I will not sit by and watch that happen. We need a program with the boldness and scale of FDR’s New Deal, a democratic project for our time. The point is not to slow innovation, but to see that its benefits reach every American.

This is a program that says by its very substance: There will be no surrender to the tech lords. None.

What there will be is a claiming of AI, and the future, for the American people.

More from The Nation

Letters From the May 2026 Issue Letters From the May 2026 Issue

Voting for vets… The meaning of evangelical… Billionaire ball clubs…

Harry Haywood and the Radical Politics of Black Communism Harry Haywood and the Radical Politics of Black Communism

For Haywood, a truly radical working-class politics in the United States also required a program of self-determination.

America’s True Fascist Architectural Legacy America’s True Fascist Architectural Legacy

It’s not the kitschy White House ballroom—it’s logistics warehouses converted to ICE detention centers.

Patrisse Cullors: Art Is Liberation Patrisse Cullors: Art Is Liberation

Black Lives Matter cofounder Patrisse Cullors says cultural work will be the key to shifting the system and imagining a world after MAGA.

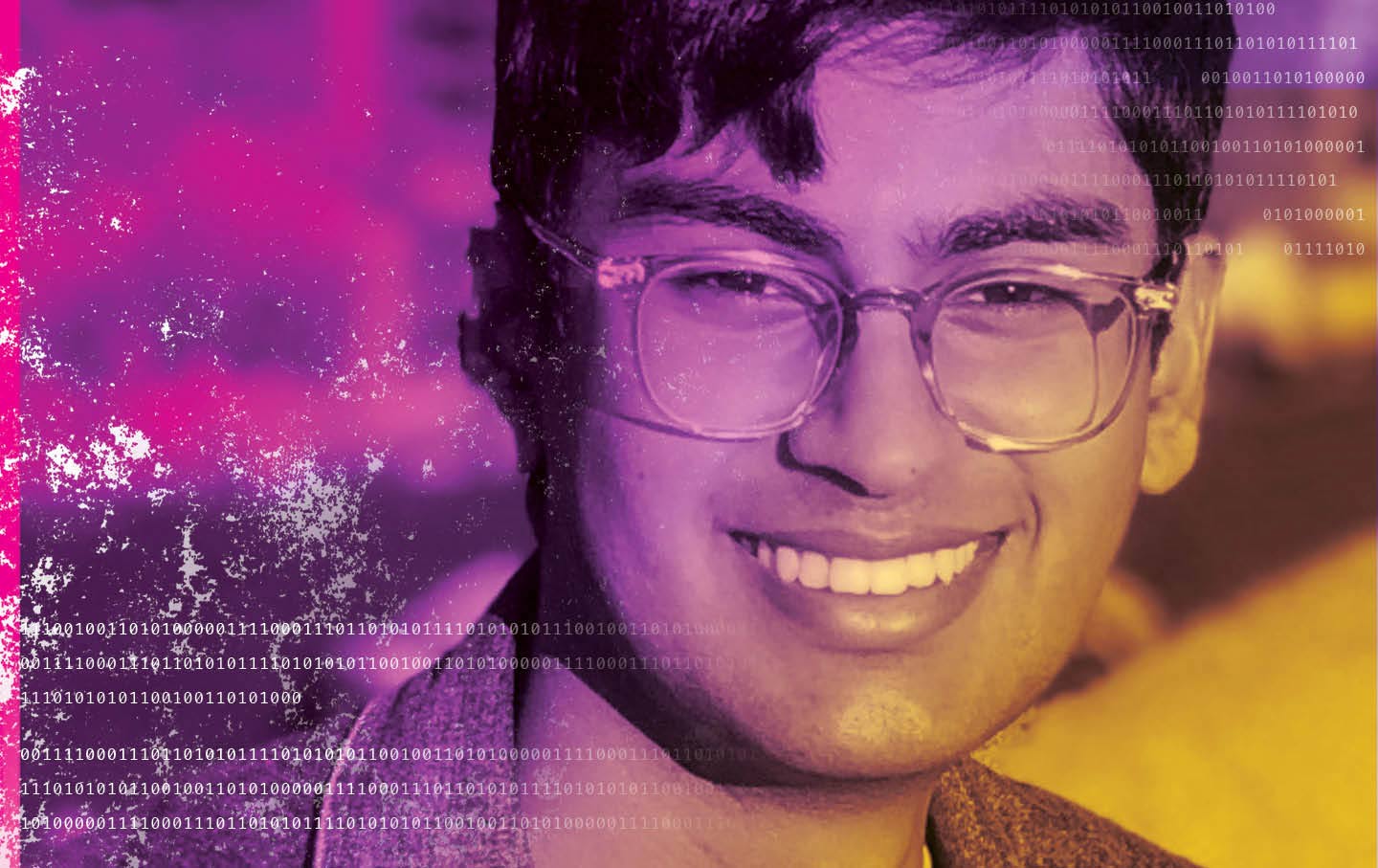

The Death of an AI Whistleblower The Death of an AI Whistleblower

Suchir Balaji sought to expose OpenAI’s data abuses. Did it come at the expense of his own life?

Why Fascists Fear Free Speech Why Fascists Fear Free Speech

The White House is following an old authoritarian playbook to suppress dissent.