These AI-Powered Surveillance Cameras Are Everywhere—and People Have Had Enough

The nationwide fight against the suddenly ubiquitous Flock camera.

Screenshots of news stories about Flock cameras from around the country.

(KVAL; Tampa Bay 28; News 8000; Fox 26)

The panopticon was, at first, just a thought experiment dreamed up by philosopher Jeremy Bentham in the 18th century: a prison designed so that any given captive could be watched at any moment, without ever knowing whether they were being watched. Now the panopticon is very real, and it is everywhere.

The surveillance infrastructure being built right now is one of the defining stories of the 21st century. Twenty years from now, people will point to this era as one when the relationship between the state and the individual was permanently renegotiated.

Bentham’s panopticon had a watchtower at its heart, but ours has no such single, static center. Instead, it has endless, constantly roving eyes: a Ring doorbell, feeding footage to police through a portal that most people who bought it to watch for porch pirates didn’t know existed; Clearview AI, which scraped 3 billion faces from the Internet and built a facial recognition database that hundreds of law enforcement agencies now use to identify people; your phone’s location data, legally purchased from brokers by federal agencies that couldn’t collect it directly without a warrant; fusion centers, where federal, state, and local agencies pool surveillance data from dozens of sources with basically no oversight and share it across jurisdictions with few restrictions; drones, increasingly deployed at protests and dispatched autonomously to 911 calls by the same companies that sell the cameras on the ground; and finally, nearly 90,000 AI-powered cameras, installed by a private Atlanta-based company called Flock Safety, photographing every car that passes and feeding the data to a network that thousands of police departments or Border Patrol can query, without a warrant, without telling anyone, for any reason an officer decides to type into a search box.

We have been pushed to accept all of this without question—to think of the panopticon as a natural, unchangeable part of life, like oxygen. And, in large part, these efforts have been successful. But not always.

Take those Flock Safety cameras. They capture billions of images of cars every month, and they have gone up in cities across the country, mostly with little public input. But in more than 30 cities, since just the start of 2025, that has started to change: The contracts ended, and the cameras came down, or, in multiple cities, municipal workers went out with garbage bags and covered the lenses one by one.

I keep coming back to that imagery—the surveillance state, outwitted by a humble garbage bag. It’s unglamorous and maybe a little absurd, but also, in a dark political moment when so much feels immovable, kind of galvanizing. The logic of surveillance infrastructure depends on a particular feeling: that the system is too vast and entrenched to fight. But the organizers who map the cameras, the activists who built tools to let people find out whether their own plates have been searched, the residents filing public records requests in cities where the contracts were never meant to see daylight—all of them are, in their different ways, dismantling that feeling. What they’re up against, though, is larger than any single entity. Canceling a municipal Flock contract removes the police department from the network, but not the big-box stores, the universities, or the private neighborhood associations still feeding data into the same pool. The long-term battle, then, is not just to break the grip these companies have on our governments. It’s to break the grip they have on the world.

What surveillance companies like Flock purport to sell is safety; last year, Flock CEO Garrett Langley prognosticated that his cameras would rid the country of “almost all crime” within a decade. But what Flock really offers is something larger. Police get cameras, but they also get access to a shared search network fed by every other Flock customer—meaning a small police department in rural Texas can query the movement history of a vehicle across thousands of camera networks nationwide. And although Flock’s private customers (HOAs, big-box stores, universities) don’t get access to the entire network, they contribute to it: Their cameras feed data into the larger pool that police can query without the camera owner’s necessarily knowing who is searching, or why. A Target in a strip mall doesn’t get to run searches across immigration databases, but it does add footage to a network that does. The data flows in one direction—from private cameras outward to state agencies, federal partners, and fusion centers—usually in ways that neither the private customers nor the local governments that signed police contracts fully understand until after the fact. Illinois discovered this when an audit by the secretary of state found that Flock had allowed US Customs and Border Protection to access state data in violation of an Illinois law designed to prevent exactly that. (Flock called the sharing “inadvertent.”)

There are no meaningful restrictions on what Flock cameras can be used for, and police don’t need a warrant to search the network. Appellate and federal courts in at least 14 states have upheld the use of automated license-plate reader evidence without a warrant, reasoning that license plates are publicly visible and therefore carry no reasonable expectation of privacy. Almost every major revelation about how Flock’s network actually operates came not from voluntary disclosure by Flock or by police but from journalists and organizers—this while statehouses across the country are gutting open-records laws and in some cases exempting surveillance contracts from disclosure requirements entirely.

The only limit on how the network gets used, in practice, is what law enforcement decides it wants to do, which is another way of saying there is no limit at all. An Electronic Frontier Foundation analysis of search logs found that more than 50 agencies, including Border Patrol, ran hundreds of searches through Flock’s network specifically tied to protest activity last year. A Texas officer used the network to track a woman who had obtained an abortion. Border Patrol had direct access to Flock’s nationwide network during a trial period in 2025; when that trial ended, local agencies continued running searches on the federal government’s behalf, or simply lent their log-on credentials. The administration has started using autonomous surveillance towers, once only seen at the southern border, in interior cities. The government has also used facial recognition in its operations; in at least one documented case in Minneapolis, an agent addressed a bystander by name, apparently in real time, from some system the agency declined to specify. The line between border surveillance and domestic surveillance has been functionally erased.

None of this is new, exactly. Police have always surveilled dissenters, always overpoliced Black and brown neighborhoods, always found ways to abuse their power with the tools at their disposal. What’s new is the infrastructure: the speed and automation of what previously required manpower and paper and a detective willing to put in the hours. The protest searches and the abortion tracking are simply the system working as designed. Which is also, it turns out, why people are fighting back.

Communities all over the United States are ending their contracts with Flock. Flagstaff, Arizona, voted unanimously to terminate in December after an hour and a half of public comment. Cambridge, Massachusetts, ended its contract in December after Flock reinstalled cameras without the city’s knowledge following a council-ordered pause—what the city called a “material breach of trust.” In Evanston, Illinois, a nearly identical sequence of events ended with the city eventually issuing Flock a cease-and-desist order. In Saranac Lake, New York, residents raised privacy concerns so forcefully at a village board meeting that a plan to install the cameras was scrapped before it started, and the Republican mayor who had pushed for them lost reelection a few weeks later. In Central Texas, Austin, Hays County, and San Marcos all canceled within months of each other. In Santa Cruz, California, the city voted 6–1 to terminate after learning that state agencies had illegally accessed its camera data roughly 4,000 times on behalf of federal law enforcement between June and October 2025 alone. In Woodburn, Oregon, a predominantly Latino city of 28,000, the cameras were powered off after an audit found that DHS and Border Patrol had accessed local data 384 times in a matter of months, despite the city’s having been told that only Oregon law enforcement could query the network. Springfield, Oregon, ended its agreement with Flock after the neighboring city of Eugene terminated its own contract following months of organizing by a group called Eyes Off Eugene and growing concerns about system vulnerabilities and data security. In Oak Park, Illinois, organizers documented that Black drivers were being flagged at a rate of 85 percent in a city where Black residents make up 19 percent of the population, and brought that analysis to their elected officials. The contract ended. In Mountain View, California, residents demanded contract termination, physical camera removal, and a full refund of the $154,000 already spent. The council voted unanimously to do all three. Each outcome is a precedent, each precedent a tool the next city can use.

The organizing has also produced an impressive ecosystem of counter-tools. DeFlock.me has mapped more than 76,000 cameras by crowdsourcing their locations. HaveIBeenFlocked.com, built by an Iowa activist after police departments accidentally released unredacted audit logs in response to public records requests, lets anyone enter their own plate number and find out whether they’ve been surveilled. Then there are the people who have simply gone out and physically disabled cameras—with paint, with garbage bags, with paint-soaked sponges attached to drones—which is not necessarily a scalable strategy but is, in its way, a completely coherent one. The surveillance state was built by people, and can be unbuilt by people. Sometimes that looks like a sponge mounted to a drone, and sometimes it looks like the humble open-records request.

The people involved in these campaigns are not all hardened civil libertarians. They are also parents and teachers and scientists and retirees who noticed something had changed in their communities without anyone asking them. The Rural Privacy Coalition, based in Skamania County, Washington, has been organizing against Flock in rural communities and shares materials at RuralPrivacy.org for other small counties trying to do the same.

But organizers know, even as they celebrate their victories, that they are only scratching the surface.

Take Ithaca, New York, where residents organized under the name Flock Off. On March 4, the Ithaca Common Council voted unanimously to end the city’s Flock contract, with protesters dressed as cameras outside city hall. “Communities are recognizing the threat that these vehicular surveillance systems pose,” said one organizer. “And that no is contagious.”

Beneath the city contract, though, like Russian dolls, were individual contracts between Flock and big-box stores, Cornell University, Ithaca College, private neighborhood associations—agreements the city had no power to terminate, feeding data into the same network the city had just voted to leave. Canceling the municipal contract removes the police department, but it doesn’t remove the city.

Denver illustrates a different version of the same problem: The city council voted unanimously against renewing the Flock contract, so Mayor Mike Johnston’s administration simply slashed the contract value to just under $500,000—a single dollar below the threshold that would have required council approval—and extended it anyway. (When Flock’s contract finally expired on March 31, 2026, all 110 city cameras came down. But the council then approved a replacement contract with a different company, and one council member noted that hundreds of private Flock cameras remain operating throughout the city, outside the city contract entirely.)

And Flock is just one node in a vast surveillance infrastructure. Ring doorbells have fed footage into law enforcement networks nationwide; Flock and Ring announced a partnership last year that collapsed only after a Super Bowl ad prompted a swift public backlash. Clearview AI has scraped billions of faces from the Internet into a facial recognition database now used by ICE, the Army, and hundreds of law enforcement agencies, and, unlike Flock, it has faced almost no organized municipal resistance; Clearview has simply ignored the fines, worked around the bans, and just signed a $9.2 million contract with ICE.

The lesson may be that the organizing model that’s working against Flock—showing up, filing records requests, forcing a public vote—works precisely because Flock requires local consent. Clearview doesn’t ask permission from anyone. Axon—which started with Tasers and body cameras and now describes itself as a “public safety technology company”—is turning those body cameras into AI-powered surveillance tools that analyze footage in real time. It has also introduced an AI tool that automatically drafts police reports from body camera footage, meaning the same company can now surveil a person, analyze the footage, and generate the official legal document describing what happened, with a human officer doing little more than hitting “approve.” It’s a closed loop: AI watching, AI summarizing, AI producing the record that follows someone through the criminal legal system, with no point at which a person is required to actually see another person and make a judgment.

All of this to say: Flock is not the disease. It’s just a particularly visible symptom, in part because it has been so sloppy. The goal of the people fighting it, for those paying close attention, is not a better-regulated version of the same thing. It’s less surveillance, period: less of a system whose function is not to keep communities safe but to keep them legible, controllable, and available for enforcement. That is a harder argument to win at a city council meeting than “this vendor can’t be trusted,” which is why the cancellation campaigns tend to make the narrower case. But the narrower case, won many, many times over, is also building the foundation for the larger one.

There’s still a version of this story that ends badly: The surveillance infrastructure becomes too entrenched to fight; the open-records laws get gutted before the next generation of tools can be exposed; and the panopticon becomes, for practical purposes, permanent. But the same political moment that has made this infrastructure more visible—the ICE sweeps, the surveillance and punishment of protest, the use of facial recognition on bystanders—has also made it impossible to ignore, and impossible to ignore turns out to be a helpful precondition for fighting back.

This isn’t how power is supposed to work in the era of the carceral state, where the scale of the surveillance apparatus, the resources of the corporations that build it, and the political will of the administration deploying it are all designed to feel inevitable, totally beyond the reach of community organizing. The point of that feeling is to produce defeated inaction. If you can’t imagine winning, you don’t really feel like showing up. But people are showing up. And they are winning—imperfectly, incompletely, one garbage bag at a time.

More from The Nation

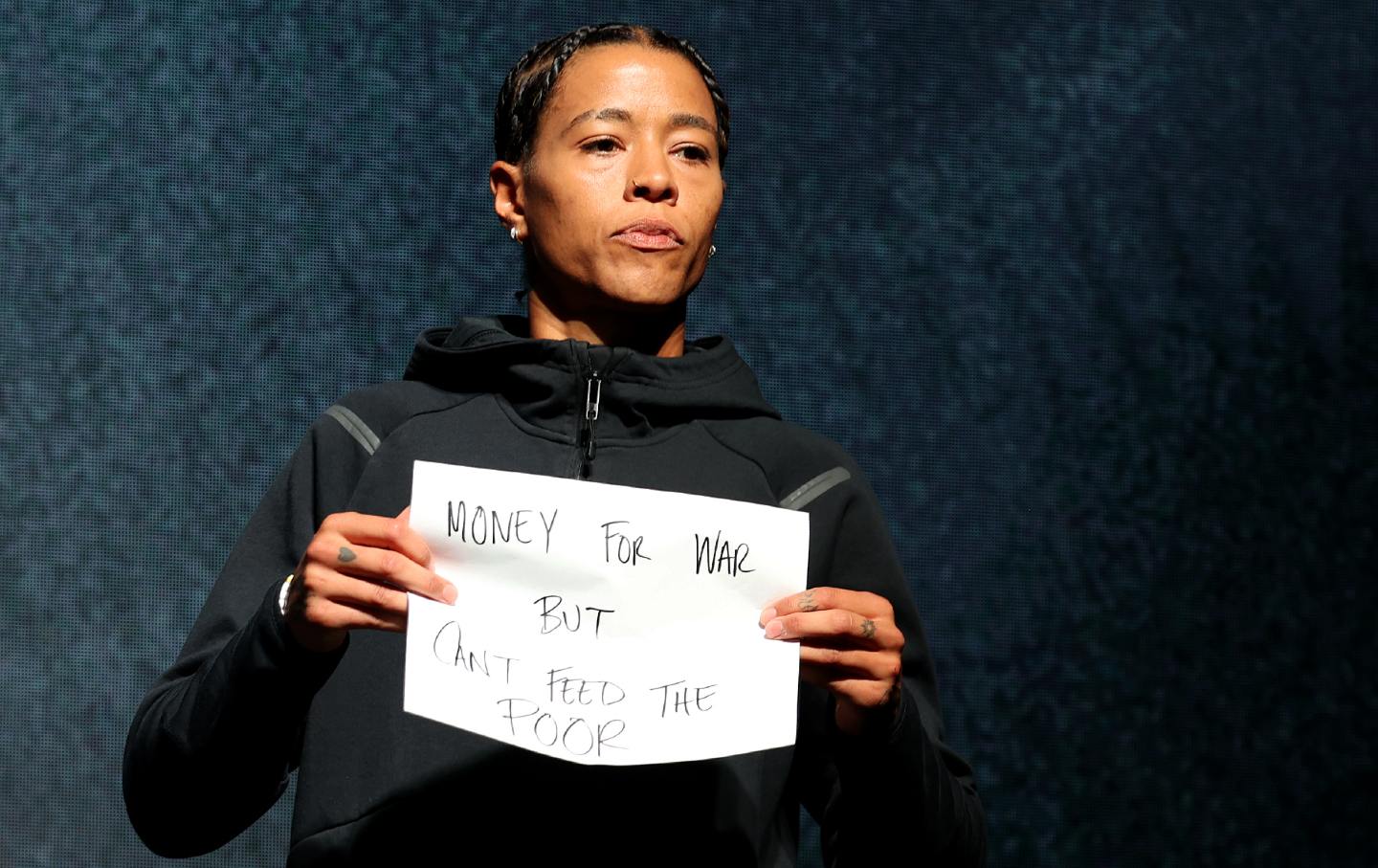

The Risk in Being More Than an Athlete The Risk in Being More Than an Athlete

Natasha Cloud became one of only a few professional athletes to speak about Gaza. Now she can’t find a WNBA team.

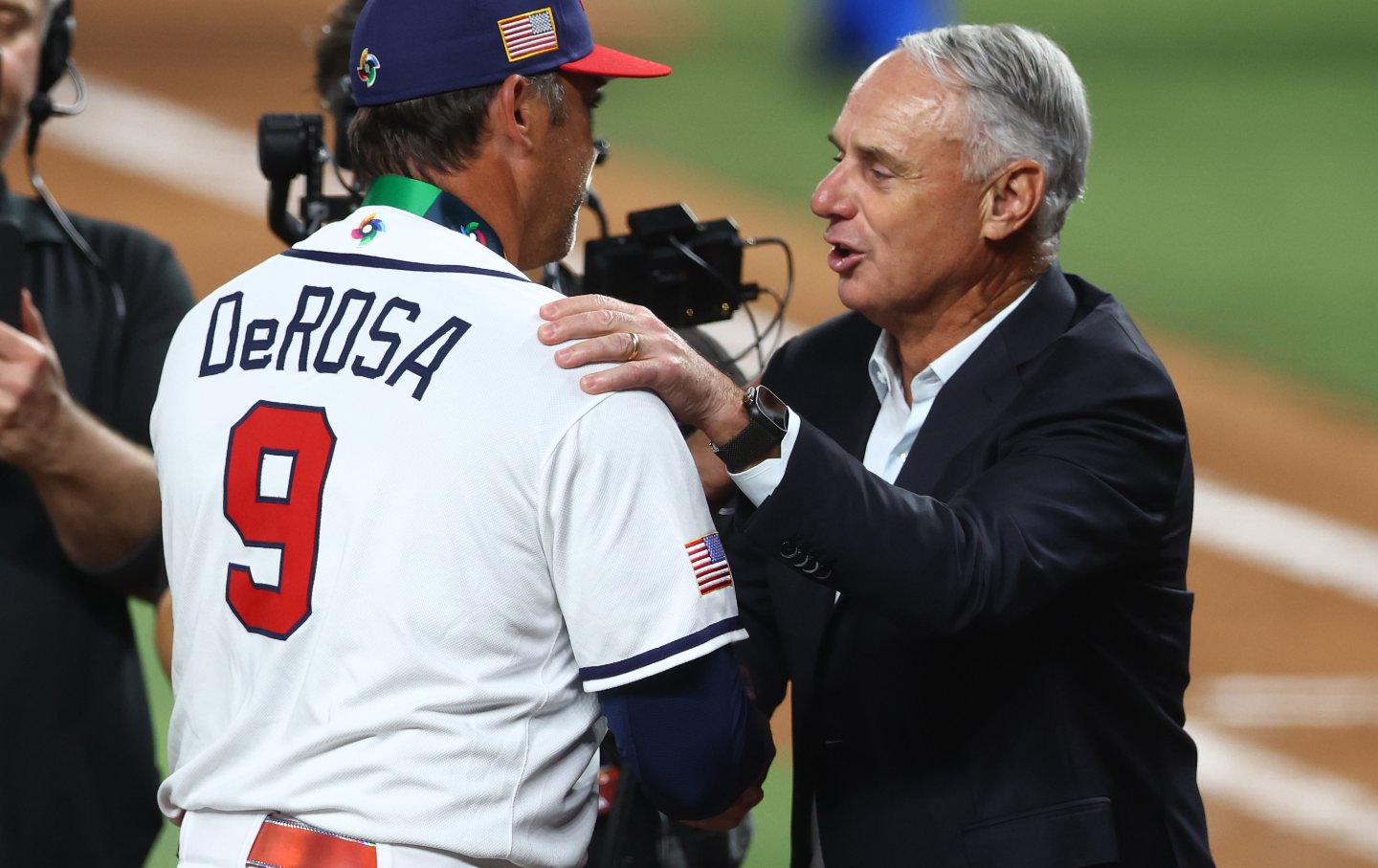

Will Baseball’s Billionaire Owners Go on Strike? Will Baseball’s Billionaire Owners Go on Strike?

A looming lockout could test whether baseball players can hold the line against billionaire team owners.

As AI Breathes Down Our Necks, It’s Time for a Luddite Renaissance As AI Breathes Down Our Necks, It’s Time for a Luddite Renaissance

Nineteenth-century textile workers longed to stay human in a machine age. So do we.

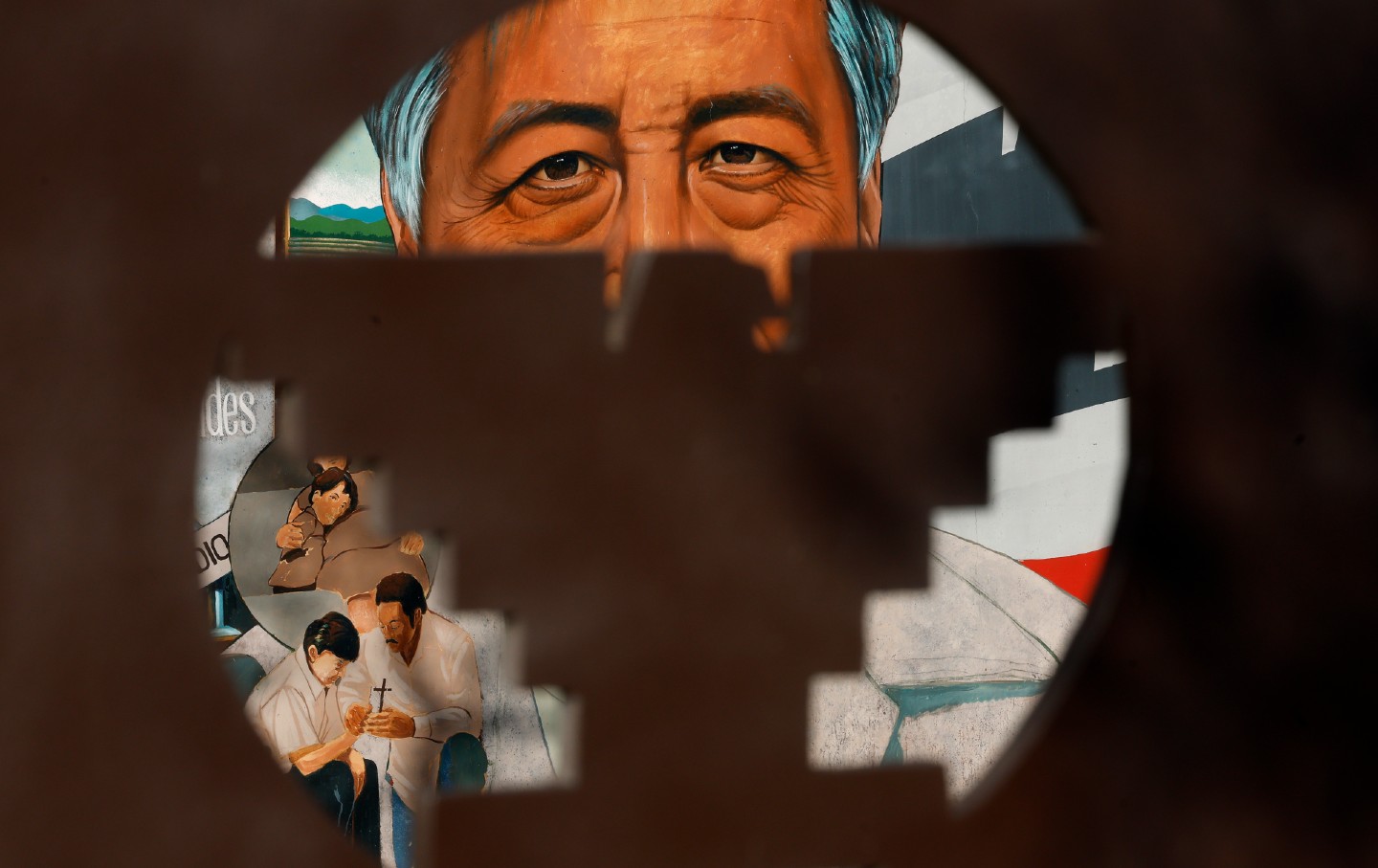

The Cost of Making Cesar Chavez the Face of a Movement The Cost of Making Cesar Chavez the Face of a Movement

The harrowing revelations about Chavez expose how much Latino history in the United States has been made to rest on one man.

Meet the Immigrant Workers Who Launched the First Major Meatpacking Strike in Decades Meet the Immigrant Workers Who Launched the First Major Meatpacking Strike in Decades

Amid the Trump administration’s assault on immigrant workers, thousands at the country’s largest meat processor organized across nationalities to launch a historic work stoppage.

How Gaza Broke Big Tech’s Campus Pipeline How Gaza Broke Big Tech’s Campus Pipeline

Big Tech’s complicity in Israel’s genocide in Gaza has pushed STEM students to organize for a more ethical tech industry.