AI Goes to War

Automated targeting, autonomous weapons, and nuclear decision-making.

Last July, the Pentagon’s chief digital and artificial intelligence officer, Doug Matty, announced awards of $200 million each to four of America’s leading tech companies—Anthropic, Google, OpenAI, and xAI—to supply advanced AI models to the Department of Defense. “Leveraging commercially available solutions into an integrated capabilities approach will accelerate the use of advanced AI as part of our Joint mission essential tasks in our warfighting domain,” Matty said when announcing the awards. Beyond this, very little information was provided about the awards, except that they were intended to exploit recent advances in generative AI—sophisticated software that can digest vast amounts of data and provide operators with suggested courses of action.

In the months that followed, the Pentagon continued to impose a shroud of secrecy over the multimillion-dollar AI awards, citing national security considerations. At the end of February, however, this shroud was broken, at least in part, when Anthropic insisted on imposing certain limits on the military use of Claude, its premier AI model. “I believe deeply in the existential importance of using AI to defend the United States and other democracies,” Anthropic CEO Dario Amodei affirmed on February 26. “However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values.” These included, he noted, the use of AI in “mass domestic surveillance” and the creation of “fully autonomous weapons,” or self-guided combat drones.

Senior Pentagon officials responded to Amodei’s statement by insisting that they had no intention of using AI for domestic surveillance and that unmanned weapons systems will always remain under human oversight. They affirmed, however, that private firms like Anthropic could not impose restrictions on how the Pentagon employs AI. “We won’t have any BigTech company decide Americans’ civil liberties,” declared Emil Michael, the undersecretary of defense for research and engineering. At the same time, however, Michael broadened the discussion by identifying another potential use of AI: to help shoot down enemy missiles in a nuclear war. Would Anthropic oppose Claude’s use in nuclear operations? Michael asked Amodei during one set of negotiations. (Amodei reportedly said no.)

The Anthropic-Pentagon fight has shed considerable light on the military’s fraught relationship with the tech giants of Silicon Valley. The dispute also demonstrated the Trump administration’s fierce determination to employ AI for strategic advantage, despite widespread concerns over its safety. But however significant in their own right, these aspects of the Anthropic-Pentagon dispute are not the most important to have been unveiled. What is more revealing, in the long term, is what it tells us about the uses to which AI is being put by the US military. As suggested by Amodei’s concerns and Undersecretary Michael’s retort, there are three areas we should be looking at: the use of AI in mass surveillance and automated targeting; lethal autonomous weapons systems; and the integration of AI into nuclear weapons control systems.

Surveillance and Targeting

When the Department of Defense first explored the use of artificial intelligence for military purposes, in 2017, its focus was highly specific: to reduce the cognitive burden of human drone pilots conducting search-and-kill missions against Middle Eastern insurgents by automating the task of searching through video footage for signs of enemy hideouts. To accomplish this mission, the Pentagon created the Algorithmic Warfare Cross-Functional Team, or Project Maven. The head of Maven, Air Force Lt. Gen. John (“Jack”) Shanahan, then turned to Google to generate the required software. When thousands of Google employees signed a petition opposing the company’s involvement in a military-oriented project of this sort, the company’s leadership chose to terminate its contract for Maven, and Shanahan reassigned the work to Palantir, a defense-oriented startup chaired by Peter Thiel, a conservative-leaning billionaire investor. Palantir then developed the algorithms that enabled Maven software to identify potential targets for attack by armed Predator drones.

Although intended originally for the task of identifying militant hideouts, Project Maven morphed over the years into a program for collating multiple streams of data, including news feeds, government records, and social media accounts, in order to identify the habits, family ties, and political views of potential adversaries. When made available to combat units, this information could then be used for lethal operations against hostile leaders and their subordinates.

In 2022, oversight responsibility for Project Maven was transferred to the National Geospatial-Intelligence Agency, a little-known Pentagon entity responsible for interpreting the imagery provided by satellites and surveillance aircraft, allowing Palantir to incorporate detailed maps into the software, now rechristened the Maven Smart System (MSS). At that time, the US Central Command (Centcom) was equipped with MSS, giving it access to detailed information on potential enemy targets throughout the Middle East. Was this technology used in designating targets during the recent US strikes on Iranian leaders? It is hard to imagine otherwise.

Now we come to Dario Amodei’s fears about domestic surveillance: Last August, US Immigration and Customs Enforcement (ICE) contracted with Palantir to employ its technology in seeking out undocumented immigrants for detention and deportation. Using a Palantir-designed system called ImmigrationOS, for Immigration Lifecycle Operating System, ICE can generate a dossier on potential deportation targets by drawing on passport records, Social Security files, IRS tax data, and other government databases. Recently, ICE has also begun using AI and facial recognition technology to identify and track anti-ICE protesters for possible arrest and prosecution as “domestic terrorists.” So far, there is no indication that the Department of Defense has joined this effort, but Amodei clearly has good reason to fear the utilization of AI in domestic surveillance operations.

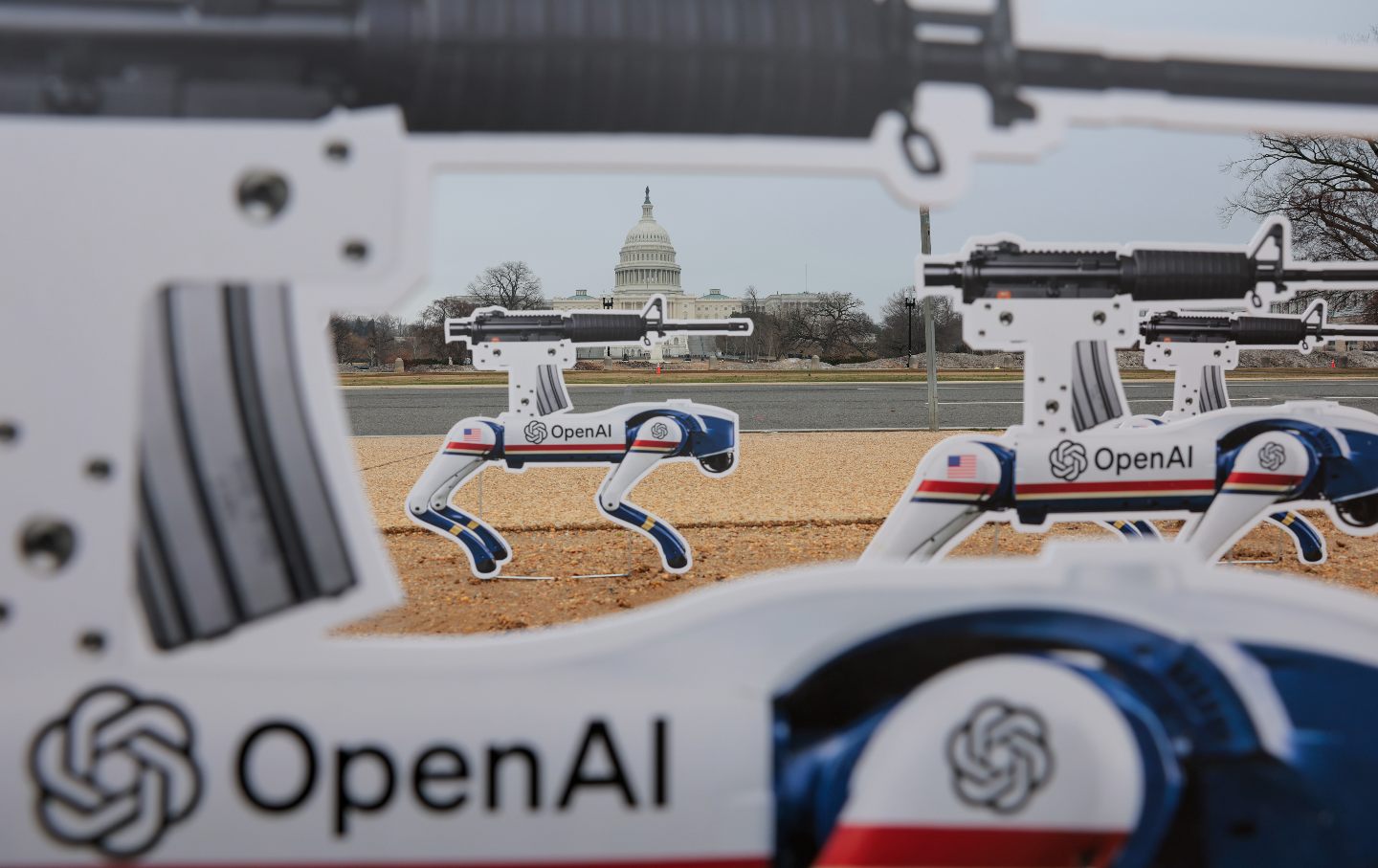

Lethal Autonomous Weapons Systems

The other area of concern identified by Amodei, autonomous weapons, also entails significant dangers. Spurred by the widespread use of combat drones in Gaza, Ukraine, and other recent conflicts, the Pentagon has sought to field a wide array of unmanned weapons systems: unmanned aerial vehicles (UAVs), unmanned ground vehicles, unmanned surface vessels, and unmanned subsea vessels. Such devices, it is widely believed, can be deployed in especially hazardous front-line operations, thereby reducing the risk to human combatants.

At present, most of the unmanned combat systems in US arsenals are designed to be remotely controlled by human operators. Although the employment of such systems would reduce the exposure of their human operators to enemy fire, it would not reduce the cognitive demand of such operations nor allow for the massing of unmanned vehicles in offensive assaults. To overcome this deficiency, Pentagon officials seek to invest drones with a high degree of autonomy, allowing them to operate in swarms with minimal human oversight. Under a program called Collaborative Operations in Denied Environment (CODE), the Defense Advanced Research Projects Agency (DARPA) has developed software enabling groups of UAVs to “find, track, identify, and engage targets” on their own, so long as they abide by preset “rules of engagement.”

Official Pentagon policy, as articulated in Department of Defense Directive 3000.09, stipulates that autonomous weapons “will be designed to allow commanders and operators to exercise appropriate levels of human judgment over the use of force.” Many analysts fear, however, that this wording provides too much leeway for the military services to employ DARPA’s technology in a way that greatly diminishes human oversight. This, in turn, could result in the unintended slaughter of civilians by “rogue” autonomous weapons. “Without proper safeguards, AI models could cause all kinds of unintended harm,” former undersecretary of defense Michèle Flournoy wrote in Foreign Affairs. “Rogue systems could even kill US troops or unarmed civilians in or near areas of combat.”

In recognition of this danger, the Campaign to Stop Killer Robots, a coalition of human rights organizations, and many governments have called for a legally binding international ban on the development and deployment of autonomous weapons. However, the United States (along with Israel and Russia) has opposed any such constraints, claiming that unilateral measures, notably Directive 3000.09, are sufficient to prevent misuse. Here again, Amodei’s reluctance to trust the Pentagon in this regard is telling.

Nuclear Command and Control

Finally, there was that brief interchange between Amodei and Michael regarding the use of AI in nuclear weapons command, control, and communications, or NC3. Neither figure elaborated on this aspect of AI’s use by the military, but it is the one deserving of our greatest concern.

The existing NC3 architecture was created during the Cold War era to ensure that the president receives notice of an impending enemy nuclear strike and is able to order a commensurate counterattack. Many of these systems incorporate obsolete technology, and the entire NC3 system is being modernized at an estimated cost of $154 billion over the next 10 years. As part of this modernization, AI is being integrated into every aspect of NC3, potentially diminishing the role of humans in nuclear decision-making.

From what can be determined from unclassified sources, AI will be used to calculate the trajectory of enemy missiles and help interceptor missiles collide with them. (A failed attempt at such an interception is portrayed in the Netflix movie A House of Dynamite.) Once an enemy assault is detected, moreover, AI will be used to generate possible US responses, ranging from limited counterstrikes to full-scale retaliation. This poses a danger that AI programs will miscalculate the nature and extent of enemy actions and/or generate excessively escalatory courses of action, deterring leaders from seeking alternatives to mutual annihilation.

Advanced AI models like Claude, ChatGPT, and Meta’s Llama are capable of many wondrous feats but are also known to malfunction at times, producing fabricated responses, or “hallucinations,” when prompted by human interrogators. When tested in war games, moreover, all of these models have displayed a tendency to favor escalatory actions in a crisis situation, including the precipitous use of nuclear weapons. It is absolutely essential, then, that humans retain oversight over every step in the nuclear decision-making process.
