Boodle & Airline Security

The attacks of September 11 have not only exposed the failures of our intelligence apparatus and the "blowback" problem of US foreign policy. They have also stripped bare how o...

Print Magazine

The press conference that Defense Secretary Donald Rumsfeld held shortly after the United States began bombing Afghanistan on October 7 was painful to behold. The questions pos...

President Bush has stated that his global campaign against terrorism will be a "new kind of war," in which traditional military approaches will give way to a more innovative mi...

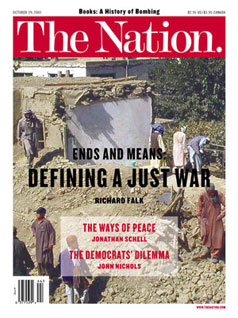

The war in Afghanistan, coming after the atrocities of September 11, provokes a welter of contradictory emotions. On the one side, a desire for justice and a yearning for secur...

As the war on terrorism gears up, governments around the world are already justifying repression in the name of that cause, while the Bush Administration and its allies send sig...

As I write, the world is filled with fear. I am having one of those reactions that psychologists describe as a stress response. I suppose I'm not alone, though. A friend calls...

The new war on terror isn't going to be of much use in combating the present plunge in America's well-being. Well before the twin towers fell to earth the country was entering...

Patriotism requires no apologies. Like anti-Communism and anti-Fascism, it is an admirable and thoroughly sensible a priori assumption from which to begin making more nuanced ...

What do we bomb next? Saudi Arabia? The Saudis would be a logical target if President Bush were serious about his stated goal of punishing nations that support terrorism.

Joe Stiglitz is no fan of Washington consensus-style globalization. Read ...

After weeks of evasion and deflection, reminiscent of two illicit lovers keen to avoid scandal, the United States and Uzbekistan announced on October 12 that they had made a de...

Liza Featherstone will be reporting periodically on the antiwar movement for The Nation. This article is part of the Haywood Burns Community Activist Journalism series, ...

For Hana Amichai

Inside a domed room photos of children's faces

turn in a candlelit dark as recorded voices

recite their names, ages and national...

The marching order to "leave nothing but footprints" enlisted an infantry of green builders this season, before our collective attention turned to security. While our man from...

After the terrorist attacks on New York and Washington, some have asked whether the West hadn't sown the seeds of its own destruction. That's not a new idea: A hundred years ag...