The Butterfly Election

On Tuesday, November 14, exactly one week after Election Day (and with no President yet in sight), a notable though little-noted disclosure was made to the public. I do not me...

Print Magazine

As rain dances used to serve certain primitive tribes and scripture still serves true believers, the two-party system serves as the religion of the political class. Never mind...

A proposed 14.2 percent postage increase for periodicals was swept aside by the Postal Rate Commission in a recommendation issued on November 13. The five-...

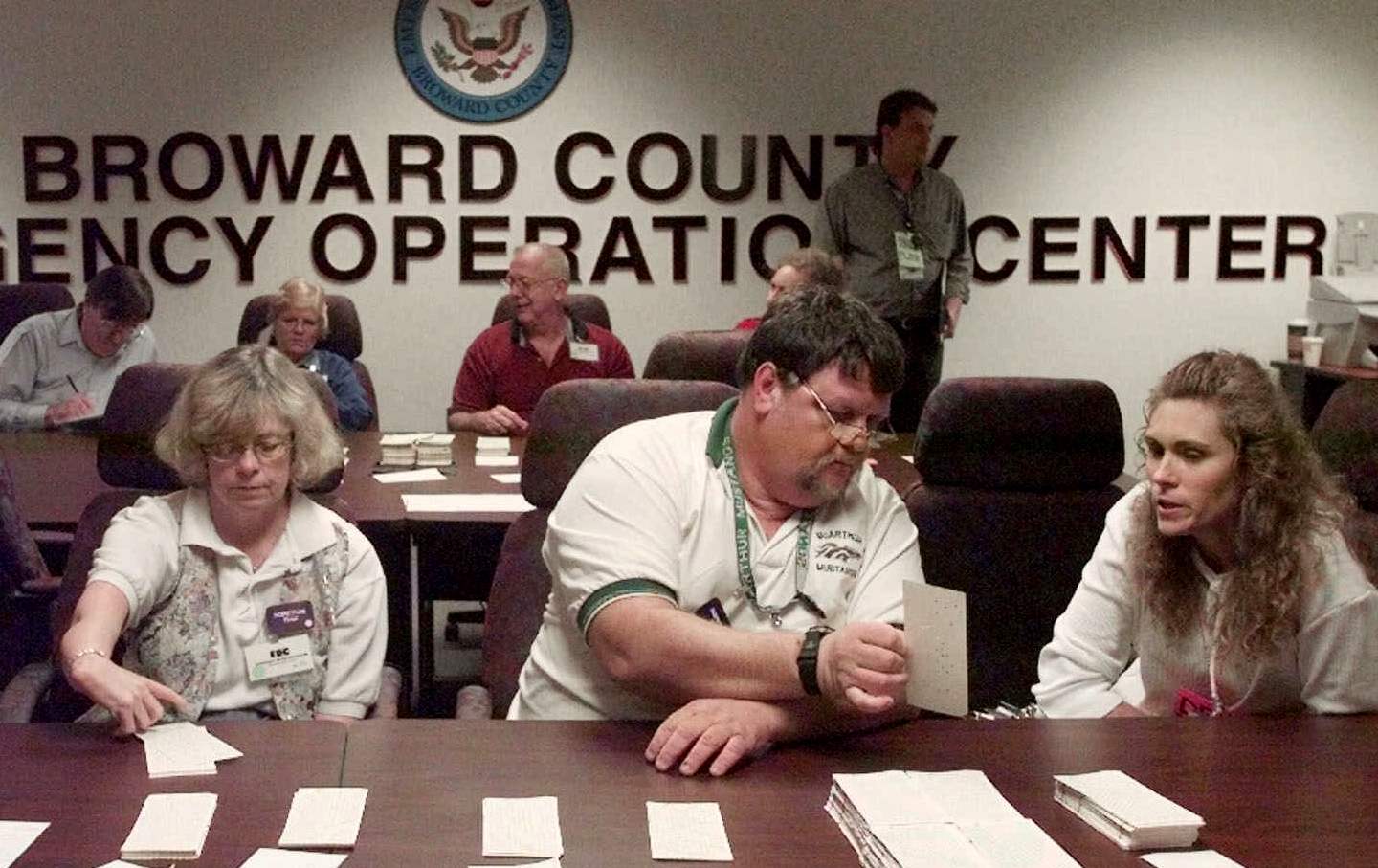

When you read this, George W. Bush may be President, which will most likely mean that his lawyers, his brother Jeb and his Florida campaign co-chair and ambassadorial wannabe ...

In Texas, vote-counters routinely count a dimpled chad as a vote for the candidate because it clearly establishes the voter's intent.

Three weeks ago, that senten...

There's an easy way to take your own pulse, and that of anyone you know, concerning the vertiginous events of the night of November 7. Was the apparent non-outcome really a "...

That year there were disputes over the presidential returns in South Carolina, Louisiana, Oregon and Florida.

Long before Carrie-Anne Moss rips open Val Kilmer's shirt and begins pounding his chest, providing him with a version of CPR that she must have learned from a Japanese drum tr...

The quiet grace of Ring Lardner Jr., who died the other week at 85, seemed at odds with these noisy, thumping times. I cannot imagine Ring playing Oprah or composing on...

To buy or not to buy turns out to have been the question of the century in America--Just Do It or Just Say No. And in the past fifteen years, consumer society has moved to the...